Generative AI for Synthetic Data: Solving Data Scarcity & Privacy Challenges

Discover how Generative AI is revolutionizing data acquisition and management. Learn how synthetic data can overcome privacy concerns, data scarcity, and bias, accelerating AI development without compromising real-world integrity.

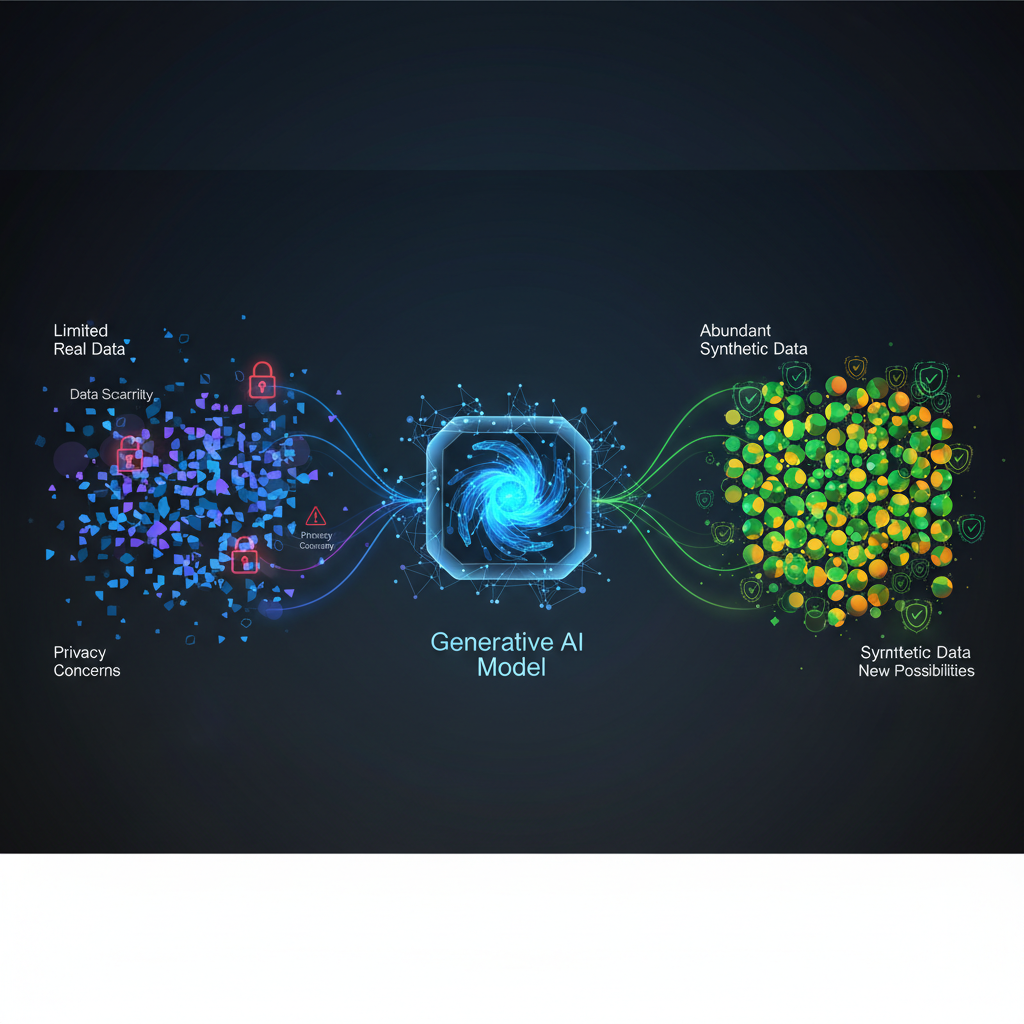

The insatiable appetite of Artificial Intelligence for data is well-documented. From training sophisticated neural networks to validating complex models, data is the lifeblood of modern AI. Yet this dependency often clashes with harsh realities: data scarcity for rare events, stringent privacy regulations protecting sensitive information, and the pervasive biases embedded in real-world datasets. These challenges don't just slow down AI development; they can halt it entirely, or lead to models that are underperforming, non-compliant, or fundamentally unfair.

Enter Generative AI for Synthetic Data Generation – a transformative field poised to revolutionize how we acquire, manage, and utilize data in the age of AI. By leveraging cutting-edge generative models, we can create artificial datasets that meticulously mirror the statistical properties and patterns of real data, all while sidestepping the pitfalls of privacy breaches, data scarcity, and inherent biases. This isn't just about creating fake data; it's about crafting intelligent, statistically representative, and privacy-preserving alternatives that unlock new frontiers for AI innovation.

The Data Dilemma: Why Synthetic Data is Not Just a Luxury, But a Necessity

Before diving into the "how," let's reiterate the "why." The need for synthetic data stems from several critical, interconnected problems facing AI practitioners today:

- Data Scarcity: Imagine trying to train a medical AI to detect an extremely rare disease. Real-world cases are, by definition, few and far between. Similarly, identifying sophisticated financial fraud or industrial anomalies often means dealing with a severely imbalanced dataset where the "event of interest" is a minuscule fraction of the total data. Collecting enough diverse, high-quality examples for these scenarios is prohibitively expensive, time-consuming, or simply impossible.

- Privacy Concerns and Regulatory Hurdles: In an era of increasing data awareness, regulations like GDPR, HIPAA, and CCPA have placed strict controls on how sensitive personal data (healthcare records, financial transactions, biometric information) can be collected, stored, and used. Sharing or directly using such data for AI training, even internally, can be fraught with legal and ethical risks. Synthetic data offers a powerful alternative: once a generator has been trained on the sensitive records under appropriate controls, downstream models can learn from realistic data patterns without direct access to the actual records.

- Bias and Fairness: Real-world data is a reflection of society, and unfortunately, society often harbors biases. If a dataset used to train a hiring algorithm disproportionately represents certain demographics, the resulting AI might perpetuate or even amplify those biases, leading to unfair or discriminatory outcomes. Synthetic data provides a mechanism to actively mitigate bias by generating balanced representations of underrepresented groups or by correcting historical imbalances.

- Cost and Time of Data Acquisition: Beyond scarcity and privacy, the sheer logistical effort of collecting, annotating, and labeling vast quantities of data can be astronomical. Think of autonomous vehicle development, where millions of miles of driving data, meticulously labeled, are required. Synthetic data can significantly reduce this burden by generating diverse scenarios on demand.

The timing for synthetic data's rise couldn't be more opportune. The explosion of generative AI models – from the awe-inspiring image generation of Diffusion Models to the sophisticated text capabilities of Large Language Models – has demonstrated an unprecedented ability to create highly realistic and diverse data across various modalities. Coupled with the intensifying focus on data privacy and the growing "Data-Centric AI" movement (which emphasizes data quality and quantity over solely model architecture tweaks), synthetic data is rapidly transitioning from a niche research topic to a mainstream, indispensable tool.

The Engine Room: Key Generative AI Algorithms

At the heart of synthetic data generation are sophisticated generative models, each with its unique strengths and mechanisms. Understanding these is crucial for appreciating the power and potential of synthetic data.

Generative Adversarial Networks (GANs)

GANs, introduced by Ian Goodfellow and colleagues in 2014, revolutionized generative modeling. They operate on a fascinating adversarial principle:

- Mechanism: A GAN consists of two neural networks, a Generator and a Discriminator, locked in a zero-sum game.

- The Generator (G) takes random noise as input and tries to produce synthetic data that resembles real data.

- The Discriminator (D) receives both real data samples and synthetic data samples from the Generator. Its task is to distinguish between the two, classifying inputs as "real" or "fake."

- During training, the Generator tries to "fool" the Discriminator by producing increasingly realistic data, while the Discriminator tries to get better at identifying the Generator's fakes. This adversarial process drives both networks to improve until the Generator can produce data indistinguishable from real data, and the Discriminator can no longer tell the difference.

- Strengths: GANs are renowned for generating incredibly realistic images and can capture complex, high-dimensional data distributions effectively.

- Challenges: Training GANs can be notoriously unstable, often suffering from issues like "mode collapse" (where the Generator only produces a limited variety of samples, failing to capture the full diversity of the real data) or difficulty in convergence.

- Variants for Synthetic Data:

- Conditional GANs (cGANs): Allow for targeted generation by conditioning the output on specific labels or attributes (e.g., generating a specific digit in MNIST).

- StyleGAN: Known for producing high-resolution, photorealistic images with disentangled latent representations, allowing for fine-grained control over generated features.

- Wasserstein GAN (WGAN): Addresses some of the training instability issues by using a different loss function (Wasserstein distance) that provides a smoother gradient.

- CTGAN (Conditional Tabular GAN): Specifically designed for generating synthetic tabular data, handling various data types (continuous, discrete, categorical) and complex correlations.
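The adversarial game described under Mechanism above is usually written as a minimax objective. As a sketch in the notation of the original GAN formulation, where $p_{\text{data}}$ is the real data distribution and $p_z$ is the noise prior fed to the Generator (symbols introduced here, not used elsewhere in this article):

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

For a fixed Generator, the optimal Discriminator is $D^*(x) = \frac{p_{\text{data}}(x)}{p_{\text{data}}(x) + p_g(x)}$, where $p_g$ is the distribution of generated samples. When $p_g$ matches $p_{\text{data}}$, this gives $D^*(x) = \tfrac{1}{2}$ everywhere, which is the formal version of "the Discriminator can no longer tell the difference."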

Variational Autoencoders (VAEs)

VAEs offer a probabilistic approach to generative modeling, providing a more stable training process compared to GANs.

- Mechanism: A VAE consists of an Encoder and a Decoder.

- The Encoder maps an input data sample to a probability distribution (mean and variance) in a lower-dimensional latent space. Instead of directly outputting a point in latent space, it outputs parameters of a distribution, from which a latent vector is sampled. This introduces a controlled amount of randomness.

- The Decoder then takes a sample from this latent space and reconstructs the original data.

- The VAE is trained to minimize two objectives: the reconstruction loss (how well the decoder reconstructs the input) and a regularization term (Kullback-Leibler divergence) that forces the latent space distribution to be close to a simple prior distribution (e.g., a standard Gaussian). This regularization ensures that the latent space is well-structured and continuous, allowing for smooth interpolation and meaningful sampling.

- Strengths: VAEs are known for their stable training, well-structured latent spaces (useful for interpolation and data manipulation), and a tractable lower bound on the data likelihood (the evidence lower bound, or ELBO) rather than an exact likelihood.

- Challenges: Generated samples can sometimes appear blurrier or less sharp than those produced by GANs, as VAEs typically optimize for average reconstruction rather than perceptual realism.
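The two training objectives and the sampling step described under Mechanism can be sketched in a few lines. This is a minimal illustration in plain Python, not a trainable model: it assumes a diagonal Gaussian encoder output (`mu`, `log_var`), a standard Gaussian prior, and squared error as the reconstruction term (which corresponds to a Gaussian decoder with fixed variance). The function names are made up for this example.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), one draw per latent dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, log_var))

def vae_loss(x, x_recon, mu, log_var):
    """Reconstruction error plus KL regularizer (the negative ELBO up to constants)."""
    reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_recon))  # assumed squared error
    return reconstruction + kl_to_standard_normal(mu, log_var)

# Usage: an encoder would produce mu and log_var; here they are hand-picked values.
rng = random.Random(0)
z = reparameterize(mu=[0.2, -0.1], log_var=[-1.0, -0.5], rng=rng)
loss = vae_loss(x=[1.0, 0.0], x_recon=[0.9, 0.1], mu=[0.2, -0.1], log_var=[-1.0, -0.5])
```

The KL term is zero only when the encoder outputs exactly the prior (`mu = 0`, `log_var = 0`), which is why it pulls the latent space toward a shape that can be sampled from directly at generation time.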

Diffusion Models (DMs)

Diffusion Models are currently at the forefront of generative AI, achieving state-of-the-art results in image, audio, and even video generation.

- Mechanism: DMs work through a two-step process:

- Forward Diffusion Process: This process gradually adds Gaussian noise to an input data sample over many time steps (often hundreds or thousands), eventually transforming it into pure random noise. This process is fixed and doesn't involve learning.

- Reverse Diffusion Process: This is the learned part. A neural network is trained to reverse the forward process, step-by-step, by predicting and removing the noise at each stage. By starting with pure noise and iteratively denoising it using the learned network, the model can generate new, realistic data samples.

- Strengths: DMs excel in generating high-quality and diverse samples, often surpassing GANs in realism and mode coverage. Their training is generally more stable.

- Challenges: The generation process can be computationally intensive and slow due to the many sequential denoising steps, although significant research is focused on reducing this cost (e.g., DDIM sampling, which uses fewer steps, or running diffusion in a compressed latent space as Latent Diffusion Models do).

- Variants:

- Denoising Diffusion Probabilistic Models (DDPMs): The foundational architecture for many modern diffusion models.

- Latent Diffusion Models (LDMs): (e.g., Stable Diffusion) Perform the diffusion process in a compressed latent space rather than directly on high-resolution pixel space, significantly reducing computational cost and speeding up generation while maintaining high quality.
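The fixed forward process described under Mechanism has a convenient closed form: a sample at any step can be drawn directly from the clean input without simulating every intermediate step. The sketch below illustrates this in plain Python. The linear noise schedule and its endpoints (`1e-4` to `0.02` over 1000 steps) are assumptions chosen for illustration, and the function names are hypothetical.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, linearly spaced (an assumed schedule)."""
    if num_steps == 1:
        return [beta_start]
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s): the fraction of signal left."""
    result, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        result.append(running)
    return result

def q_sample(x0, t, alpha_bars, rng):
    """Jump straight to step t (0-indexed): x_t = sqrt(ab) * x0 + sqrt(1 - ab) * eps."""
    ab = alpha_bars[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(ab) * v + math.sqrt(1.0 - ab) * e for v, e in zip(x0, noise)]
    return x_t, noise

betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)

# Usage: noise a toy 3-value "sample" to an early step and to the final step.
rng = random.Random(0)
slightly_noisy, eps_early = q_sample([0.5, -1.0, 2.0], 10, alpha_bars, rng)
almost_pure_noise, eps_late = q_sample([0.5, -1.0, 2.0], 999, alpha_bars, rng)
```

In the learned reverse process, the returned `noise` is what a denoising network would typically be trained to predict from `x_t` and `t`; that network is not shown here.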

Other Notable Generative Models

- Autoregressive Models: Generate data sequentially, element by element, predicting the next based on previous ones. Excellent for sequential data like text (e.g., GPT models, which predict the next token) and audio. While powerful, they can be slow for high-dimensional data, and errors can compound over long sequences.

- Flow-based Models: Explicitly learn a bijective (invertible) mapping between a simple latent distribution (like a Gaussian) and the complex data distribution. This allows for exact likelihood computation and efficient inference, but designing complex invertible transformations for very high-dimensional data can be challenging.
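To make the "exact likelihood" property of flow-based models concrete, here is a deliberately tiny sketch: a one-dimensional affine flow over a standard Gaussian latent. Real flows stack many learned invertible layers; the `AffineFlow` class and its fixed `scale` and `shift` are illustrative assumptions, not any library's API.

```python
import math

def standard_normal_logpdf(z):
    """Log density of N(0, 1) at z."""
    return -0.5 * (z * z + math.log(2.0 * math.pi))

class AffineFlow:
    """Invertible map x = scale * z + shift with z ~ N(0, 1)."""

    def __init__(self, scale, shift):
        if scale == 0:
            raise ValueError("scale must be non-zero for the map to be invertible")
        self.scale = scale
        self.shift = shift

    def forward(self, z):
        """Latent to data (used for sampling)."""
        return self.scale * z + self.shift

    def inverse(self, x):
        """Data to latent (used for likelihood evaluation)."""
        return (x - self.shift) / self.scale

    def log_prob(self, x):
        # Change of variables: log p_x(x) = log p_z(f^{-1}(x)) - log |df/dz|
        return standard_normal_logpdf(self.inverse(x)) - math.log(abs(self.scale))

# Usage: this flow represents N(mean=1, std=2), and its log-density is exact.
flow = AffineFlow(scale=2.0, shift=1.0)
log_density_at_mean = flow.log_prob(1.0)
```

The subtraction of `log |scale|` is the Jacobian correction; in higher dimensions it becomes the log-determinant of the Jacobian, which is the part that makes designing expressive invertible layers hard.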

Practical Applications: Where Synthetic Data Shines

The utility of synthetic data extends across virtually every industry touched by AI, offering solutions to long-standing data challenges.

Healthcare: Protecting Patients, Advancing Research

- Problem: Patient data is highly sensitive, subject to strict regulations (HIPAA, GDPR), and often scarce for rare conditions. This severely limits data sharing and collaborative research.

- Application: Generative models can create synthetic patient records (demographics, diagnoses, lab results, treatment plans) that maintain the statistical properties and correlations of real data without containing any actual patient identifiers. This enables:

- Training diagnostic AI: Developing models for disease detection without compromising privacy.

- Drug discovery: Simulating clinical trials or generating synthetic molecular structures.

- Medical imaging: Augmenting datasets for rare conditions or generating variations of existing scans to improve model robustness.

- Academic research: Allowing researchers to work with realistic data without navigating complex data access agreements.

Finance: Fraud Detection, Risk Management, and Market Simulation

- Problem: Financial transaction data is highly private. Fraud events are rare, leading to extremely imbalanced datasets that are difficult for traditional models to learn from. Simulating complex market behaviors is also challenging.

- Application:

- Fraud Detection: Generate synthetic transaction data, including diverse fraud patterns, to train more robust fraud detection models. This helps overcome the class imbalance problem where legitimate transactions vastly outnumber fraudulent ones.

- Risk Assessment: Create synthetic customer profiles and financial histories to test and improve credit scoring models or assess risk for new financial products.

- Market Simulation: Generate synthetic stock prices, trading volumes, or economic indicators to simulate various market scenarios, helping institutions develop more resilient trading strategies and risk management frameworks.

Autonomous Vehicles: Safe and Diverse Training Environments

- Problem: Training self-driving cars requires vast amounts of diverse driving data, including rare and dangerous "edge cases" (e.g., unusual weather, unexpected obstacles, specific traffic interactions). Collecting this in the real world is expensive, time-consuming, and risky.

- Application:

- Synthetic Scenarios: Generate synthetic images and videos of various weather conditions (rain, snow, fog), lighting changes (day, night, glare), and traffic scenarios (pedestrians crossing unexpectedly, complex intersections).

- Edge Case Generation: Create specific, challenging scenarios that are critical for safety but rarely occur in real-world driving. This allows for rigorous testing and training of perception, planning, and control systems in a safe, simulated environment.

- Data Augmentation: Augment existing real datasets with synthetic variations to improve the generalization capabilities of perception models.

E-commerce & Retail: Personalization and Inventory Optimization

- Problem: The "cold-start problem" for new products (lack of user interaction data), privacy concerns around customer purchase histories, and the need for personalized recommendations.

- Application:

- Recommendation Engines: Generate synthetic user behavior data, purchase histories, and product reviews to train recommendation systems, especially for new products or in regions with limited real data.

- Personalized Marketing: Create synthetic customer segments and their preferences to test and optimize marketing campaigns without using real customer data.

- Inventory Management: Simulate various demand patterns and supply chain disruptions using synthetic data to optimize inventory levels and reduce waste.

Cybersecurity: Robust Anomaly Detection

- Problem: Real-world cyberattack data is often scarce, proprietary, or difficult to obtain. New attack vectors emerge constantly, making it hard to train robust anomaly detection systems.

- Application: Generate synthetic network traffic data that includes various known and novel attack patterns (e.g., DDoS, phishing attempts, malware propagation). This allows security analysts to:

- Train intrusion detection systems (IDS) and security information and event management (SIEM) tools.

- Develop and test new security algorithms against a diverse range of threats.

- Simulate breach scenarios for incident response training.

Software Testing & Development: Quality Assurance

- Problem: Developers need realistic and diverse test data to thoroughly test software applications, identify bugs, and ensure robustness, often without access to production data.

- Application: Create synthetic user interface interactions, database entries, API requests, and system logs. This is invaluable for:

- Unit and integration testing: Generating specific edge cases or large volumes of data for performance testing.

- Database population: Creating realistic test databases for development and staging environments.

- Load testing: Simulating high user traffic with synthetic user behavior.

Data Augmentation & Bias Mitigation: Building Fairer AI

- Problem: Many real-world datasets are imbalanced (e.g., fewer examples of a minority class) or contain inherent societal biases that lead to unfair AI outcomes.

- Application: This is one of the most powerful applications. Generative models can be used to:

- Balance datasets: Generate synthetic samples for underrepresented classes or demographic groups to create a more balanced training set, leading to models whose performance is more even across groups.

- Mitigate bias: Actively generate data that corrects for known biases in the original dataset, promoting fairness and equity in AI systems. For example, generating more diverse facial images for face recognition systems or more diverse resume data for hiring algorithms.
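The dataset-balancing idea above can be sketched end to end with a deliberately simple stand-in for a generative model: an independent Gaussian fitted to each feature of the minority class. This is an assumption made to keep the example self-contained; in practice a tabular generator such as CTGAN would take the place of `fit_gaussian_per_feature` and `sample_rows`, both of which are hypothetical helper names.

```python
import random
import statistics

def fit_gaussian_per_feature(rows):
    """Estimate an independent mean and standard deviation for each column."""
    columns = list(zip(*rows))
    return [(statistics.mean(col), statistics.stdev(col)) for col in columns]

def sample_rows(params, n, rng):
    """Draw n synthetic rows from the fitted per-feature Gaussians."""
    return [[rng.gauss(mu, sigma) for mu, sigma in params] for _ in range(n)]

def balance_with_synthetic(majority, minority, rng):
    """Top up the minority class with synthetic rows until both classes have equal size."""
    deficit = len(majority) - len(minority)
    if deficit <= 0:
        return list(minority)
    params = fit_gaussian_per_feature(minority)  # needs at least 2 minority rows
    return list(minority) + sample_rows(params, deficit, rng)

# Usage with toy data: 50 majority rows, 5 minority rows, 2 features each.
rng = random.Random(42)
majority = [[rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)] for _ in range(50)]
minority = [[rng.gauss(5.0, 1.0), rng.gauss(5.0, 1.0)] for _ in range(5)]
balanced_minority = balance_with_synthetic(majority, minority, rng)
```

Because each feature is modeled independently, this stand-in ignores correlations between columns, which is exactly the kind of structure a learned generative model is meant to preserve.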

The Cutting Edge: Recent Developments and Emerging Trends

The field of synthetic data generation is evolving rapidly, driven by advancements in generative AI and increasing practical demand.

- Advanced Evaluation Metrics: Moving beyond subjective assessment, researchers are developing sophisticated quantitative metrics to evaluate synthetic data quality. These include:

- Statistical Similarity: Metrics like Fréchet Inception Distance (FID) for images, Maximum Mean Discrepancy (MMD), and various statistical tests for tabular data to compare distributions.

- Privacy Metrics: Quantifying the privacy guarantees, often through differential privacy (DP) analysis, to ensure no real data points can be reconstructed or inferred.

- Utility Metrics: The ultimate test: how well do models trained solely on synthetic data perform when evaluated on real data? This directly measures the practical value of the synthetic data.

- Conditional Synthetic Data Generation: The ability to generate data based on specific conditions or attributes is becoming increasingly powerful. For example, generating a medical record for a 45-year-old female with diabetes, or a product image with specific features. This fine-grained control is crucial for targeted data augmentation and scenario generation.

- Multi-modal Synthetic Data: The next frontier is generating synthetic data that spans multiple modalities simultaneously. Imagine generating a video of a driving scenario along with the accompanying sensor data (Lidar, Radar) and a textual description of the events. This is vital for complex AI systems like autonomous vehicles or robotics.

- Foundation Models for Synthetic Data: Leveraging the immense power of large pre-trained generative models (like text-to-image diffusion models or large language models) and fine-tuning them for specific synthetic data generation tasks. These foundation models often provide a superior starting point, leading to higher quality and diversity with less task-specific data.

- Synthetic Data as a Service (SDaaS): The growing demand has led to the emergence of specialized platforms and companies offering synthetic data generation as a service. These providers abstract away the complexity of model training, making high-quality synthetic data accessible to a broader range of organizations.

- Ethical Considerations and "Synthetic Deepfakes": The very power that makes synthetic data so valuable also raises ethical concerns. The ability to generate highly realistic images, videos, and audio (often termed "deepfakes" when used maliciously) necessitates research into robust detection mechanisms, watermarking techniques, and ethical guidelines for deployment.

- Integration with Differential Privacy (DP): Combining generative models with differential privacy mechanisms offers a strong, mathematically provable guarantee of privacy. By injecting carefully calibrated noise during training or generation, DP bounds how much the presence or absence of any single individual's record can change the distribution of the generator's outputs, so the synthetic data reveals little about any one person. The strength of the guarantee is set by a privacy budget, commonly denoted ε.
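As a small illustration of "carefully calibrated noise," the sketch below applies the Laplace mechanism to a histogram of one categorical column and then samples synthetic values from the noisy counts. This is a classic, much simpler technique than differentially private training of a deep generative model, and it is swapped in here only to show the calibration step. The function names are hypothetical, and the category list is assumed to be fixed in advance rather than derived from the private data.

```python
import random
from collections import Counter

def laplace_noise(scale, rng):
    """Laplace(0, scale) noise, drawn as the difference of two exponential samples."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def dp_histogram(values, categories, epsilon, rng):
    """Noisy counts. Adding or removing one record changes one count by 1,
    so the L1 sensitivity is 1 and the noise scale is 1 / epsilon."""
    counts = Counter(values)
    noisy = {c: counts.get(c, 0) + laplace_noise(1.0 / epsilon, rng) for c in categories}
    # Clamping negatives is post-processing, so it does not weaken the guarantee.
    return {c: max(0.0, v) for c, v in noisy.items()}

def sample_synthetic(noisy_counts, n, rng):
    """Draw n synthetic categorical values in proportion to the noisy counts."""
    categories = list(noisy_counts)
    weights = [noisy_counts[c] for c in categories]
    if sum(weights) == 0:
        weights = [1.0] * len(categories)  # fall back to uniform if all counts clamp to 0
    return rng.choices(categories, weights=weights, k=n)

# Usage: a toy column of diagnoses with a pre-agreed category list.
rng = random.Random(7)
real_column = ["A"] * 80 + ["B"] * 20
noisy_counts = dp_histogram(real_column, ["A", "B", "C"], epsilon=1.0, rng=rng)
synthetic_column = sample_synthetic(noisy_counts, 100, rng)
```

A smaller `epsilon` means more noise and stronger privacy, at the cost of synthetic values that track the real proportions less closely.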

Navigating the Landscape: Challenges and Future Directions

While the promise of synthetic data is immense, several challenges remain that researchers and practitioners are actively addressing.

- The Fidelity-Diversity-Privacy Trilemma: Achieving perfect fidelity (how realistic the data is), maximum diversity (how well it covers the underlying data distribution), and strong privacy guarantees (how well it protects individual information) simultaneously is a complex trade-off. Improving one often comes at the expense of another. Finding the optimal balance for specific applications is an ongoing research area.

- Generalization to Out-of-Distribution Data: Models trained exclusively on synthetic data might struggle when encountering real-world data that deviates significantly from the synthetic distribution. Ensuring that synthetic data captures sufficient real-world variability, including edge cases, is crucial for robust model performance.

- Validation and Trust: How do we rigorously validate that synthetic data is truly representative, useful, and private enough for a given application? Establishing standardized, transparent, and quantifiable metrics for trustworthiness is paramount for widespread adoption. This includes not just statistical similarity but also utility for downstream tasks and provable privacy guarantees.

- Scalability: Generating vast quantities of high-quality synthetic data, especially for complex, high-dimensional datasets (e.g., high-resolution video, multi-modal sensor streams), can be computationally intensive and require significant infrastructure. Efficient generation methods and distributed computing are key to overcoming this.

- Interpretability: Understanding why a generative model produces certain synthetic data points or fails to capture specific real-world nuances can be challenging. Improving the interpretability of generative models would aid in debugging, improving quality, and building trust.

- Legal and Ethical Frameworks: As synthetic data becomes more prevalent, legal and ethical frameworks need to evolve to address issues of ownership, accountability, and potential misuse.
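On the validation question raised above, one of the simplest quantitative checks for a single numeric column is the two-sample Kolmogorov-Smirnov statistic: the largest gap between the empirical cumulative distributions of the real and synthetic values. The sketch below is a plain-Python illustration with a hypothetical function name. It covers only per-column statistical similarity, not downstream utility or privacy.

```python
def ks_statistic(real, synthetic):
    """Largest absolute gap between the two empirical CDFs.

    0.0 means the samples have identical empirical distributions;
    1.0 means their value ranges do not overlap at all.
    """
    real_sorted, synth_sorted = sorted(real), sorted(synthetic)
    n, m = len(real_sorted), len(synth_sorted)
    i = j = 0
    gap = 0.0
    while i < n and j < m:
        # Advance both CDFs past the smallest value not yet consumed.
        x = min(real_sorted[i], synth_sorted[j])
        while i < n and real_sorted[i] <= x:
            i += 1
        while j < m and synth_sorted[j] <= x:
            j += 1
        gap = max(gap, abs(i / n - j / m))
    return gap

# Usage: compare a real column against two candidate synthetic columns.
real_ages = [23, 35, 41, 52, 60]
close_match = ks_statistic(real_ages, [24, 34, 42, 51, 61])
poor_match = ks_statistic(real_ages, [70, 75, 80, 85, 90])
```

A low statistic on every column is necessary but not sufficient: it says nothing about correlations between columns, which is why utility and privacy checks are still needed alongside it.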

Conclusion

Generative AI for Synthetic Data Generation is no longer a futuristic concept; it is a present-day imperative and a powerful catalyst for innovation in AI. As the regulatory landscape tightens, data scarcity persists, and the demand for robust, unbiased, and fair AI systems grows, synthetic data will play an increasingly central role. It offers a unique pathway to democratize access to data, accelerate AI development across industries, and build more resilient and ethical machine learning models.

For AI practitioners and enthusiasts, understanding the mechanisms of generative models, exploring the diverse applications of synthetic data, and engaging with the ongoing challenges and ethical considerations is not just beneficial – it is essential. The ability to intelligently create data, rather than merely consume it, marks a significant paradigm shift, promising a future where data limitations are no longer insurmountable barriers, but rather opportunities for generative intelligence to flourish. The journey has just begun, and the potential for synthetic data to reshape the AI landscape is immense.