Generative AI: Revolutionizing Scientific Discovery and Drug Design

Explore how Generative AI, extending well beyond the text and image generation that made LLMs and diffusion models famous, is transforming scientific research, drug development, and materials science. Learn about its potential to accelerate innovation, reduce costs, and unlock new possibilities in complex domains.

The quest for new medicines, advanced materials, and a deeper understanding of the natural world has long been a cornerstone of human endeavor. Yet, these pursuits are often characterized by immense complexity, high costs, and protracted timelines. Imagine a world where we could design a molecule to precisely target a disease, synthesize a material with unprecedented properties, or even formulate novel scientific hypotheses with the speed and scale of artificial intelligence. This vision, once confined to science fiction, is rapidly becoming a reality thanks to the explosive growth of Generative AI.

While Large Language Models (LLMs) and Diffusion Models have captured public imagination with their ability to create text and images, their underlying principles – learning complex data distributions to generate novel, realistic samples – are proving transformative in the high-stakes domains of scientific discovery and drug design. This isn't just about incremental improvements; it's a paradigm shift, promising to accelerate innovation and unlock solutions to some of humanity's grandest challenges.

The Bottleneck of Traditional Discovery

Before diving into the AI revolution, it's crucial to understand the limitations of traditional scientific discovery. Drug development, for instance, is a notoriously arduous journey. Bringing a single drug to market typically takes over a decade and costs billions of dollars, and roughly 90% of candidates that enter clinical trials never reach approval. This process involves:

- Target Identification: Pinpointing the specific biological mechanisms or molecules (e.g., proteins, genes) involved in a disease.

- Lead Discovery: Screening millions of compounds (often through high-throughput screening) to find a "hit" that interacts with the target.

- Lead Optimization: Modifying the hit compound to improve its efficacy, selectivity, safety, and pharmacokinetic properties.

- Pre-clinical and Clinical Trials: Extensive testing in labs, animals, and human subjects.

Each step is iterative, labor-intensive, and often relies on intuition, trial-and-error, and exhaustive experimentation. Materials science faces similar hurdles, where discovering novel compounds with desired properties (e.g., conductivity, strength, catalytic activity) can involve synthesizing and testing countless permutations. Generative AI offers a powerful antidote to this inefficiency, enabling us to design rather than merely discover.

Generative AI for Molecular and Material Design

At the heart of this revolution is the ability of generative models to create novel molecular and material structures with specified properties. Instead of sifting through existing libraries, we can now instruct AI to invent new chemical entities from scratch.

The Toolkit of Generative Models

Several powerful generative AI architectures are being adapted for molecular and material design:

1. Variational Autoencoders (VAEs)

VAEs learn a compressed, continuous latent-space representation of molecules. The encoder maps discrete molecular structures (e.g., SMILES strings, molecular graphs) into this latent space, and the decoder reconstructs them. The key advantage is that sampling points from this latent space and feeding them to the decoder generates novel molecules. Validity is not guaranteed, however: early SMILES-based VAEs often decoded to invalid strings, which motivated graph- and fragment-based variants such as the Junction Tree VAE. Interpolating between two known molecules in the latent space can also yield intermediate structures.

Example: A VAE trained on a dataset of drug-like molecules can learn to represent their chemical features in a continuous vector. By slightly perturbing a point in this latent space and decoding it, we might generate a molecule that is structurally similar to an existing drug but with subtly altered properties, potentially improving its efficacy or reducing side effects.
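The two latent-space operations described above, sampling a latent vector and interpolating between two of them, can be sketched in a few lines of plain Python. This is a minimal illustration, not a working model: it assumes a (hypothetical) trained encoder has already produced a mean `mu` and log-variance `log_var` for each molecule, and the decoder that would turn each latent point back into a molecule is not shown. `kl_to_standard_normal` is the regularization term of the standard VAE loss, which is what keeps the latent space smooth enough to sample from.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Reparameterization trick: z = mu + sigma * eps with eps ~ N(0, 1) per dimension."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL(N(mu, diag(sigma^2)) || N(0, I)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))


def interpolate(z_a, z_b, steps):
    """Evenly spaced points on the straight line from z_a to z_b, endpoints included."""
    return [
        [a + (b - a) * i / (steps - 1) for a, b in zip(z_a, z_b)]
        for i in range(steps)
    ]


# Hypothetical encoder outputs for two molecules (a trained encoder would supply these).
rng = random.Random(0)
z_drug = reparameterize([0.2, -1.0, 0.5], [-2.0, -2.0, -2.0], rng)
z_analog = reparameterize([0.4, -0.6, 0.1], [-2.0, -2.0, -2.0], rng)
path = interpolate(z_drug, z_analog, steps=5)  # each point would be passed to the decoder
```

Linear interpolation is the simplest choice; whether the decoded intermediates are chemically sensible depends entirely on how well the trained latent space is organized.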

2. Generative Adversarial Networks (GANs)

GANs consist of two competing neural networks: a generator and a discriminator. The generator creates synthetic molecular structures, aiming to fool the discriminator into believing they are real. The discriminator, in turn, learns to distinguish between real molecules from a training dataset and fake ones produced by the generator. This adversarial process pushes the generator to produce increasingly realistic and novel compounds.

Example: A GAN can be trained to generate SMILES strings (a linear notation for chemical structures). The generator might output CC(=O)Oc1ccccc1C(=O)O (aspirin), and the discriminator would score how closely it resembles the real drug molecules in the training set. The discriminator does not check chemical validity directly, so validity is typically encouraged with an additional reward or enforced by filtering outputs afterward. Through repeated iterations, the generator learns to produce diverse and chemically plausible SMILES strings.
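The adversarial game described here is usually written as two binary cross-entropy losses. The sketch below computes them from discriminator outputs alone, assuming those outputs are probabilities in (0, 1) that an input is a real training molecule; the networks themselves and the SMILES encoding they operate on are left out. The generator term uses the common "non-saturating" form, -log D(G(z)), rather than minimizing log(1 - D(G(z))) directly, because it gives stronger gradients early in training.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: push D(real) toward 1 and D(fake) toward 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator believes fakes are real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# At the theoretical equilibrium the discriminator outputs 0.5 for every input.
print(discriminator_loss([0.5, 0.5], [0.5, 0.5]))  # 2 * ln 2, about 1.386
print(generator_loss([0.5, 0.5]))                  # ln 2, about 0.693
```

Because neither loss knows any chemistry, generated strings are usually passed through a cheminformatics parser afterward; RDKit's `Chem.MolFromSmiles`, for example, returns `None` for a SMILES string it cannot parse.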

3. Graph Neural Networks (GNNs) with Generative Capabilities

Molecules are inherently graph-structured data, with atoms as nodes and bonds as edges, and GNNs are naturally suited to processing this topological information. Generative GNNs can construct molecules atom by atom and bond by bond, which makes it straightforward to enforce valency rules, and therefore basic chemical validity, at every step.

Example: A GNN-based generative model might start with a single carbon atom, then iteratively add bonds and other atoms (e.g., oxygen, nitrogen) based on learned probabilities, ensuring that each new atom forms valid connections with existing atoms. This allows for fine-grained control over the molecular construction process.
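The valence bookkeeping that keeps atom-by-atom generation valid can be shown without any neural network. In this sketch, a generative GNN would normally score candidate actions; here the actions are hard-coded, and `valid_actions` plays the role of the mask that removes invalid choices before sampling. The `MAX_VALENCE` table, implicit hydrogens, and the restriction to neutral C, N, and O are simplifying assumptions, and ring-closing bonds between existing atoms are not modeled.

```python
MAX_VALENCE = {"C": 4, "N": 3, "O": 2}  # neutral atoms; implicit hydrogens fill the rest


class MolGraph:
    def __init__(self, first_atom):
        self.atoms = [first_atom]
        self.bonds = []  # (atom_i, atom_j, bond_order)

    def free_valence(self, idx):
        used = sum(order for i, j, order in self.bonds if idx in (i, j))
        return MAX_VALENCE[self.atoms[idx]] - used

    def valid_actions(self):
        """All (element, attach_to, order) moves that respect valence: the action mask."""
        return [
            (element, idx, order)
            for idx in range(len(self.atoms))
            for element in MAX_VALENCE
            for order in (1, 2, 3)
            if order <= self.free_valence(idx) and order <= MAX_VALENCE[element]
        ]

    def add_atom(self, element, attach_to, order=1):
        """Append a new atom bonded to an existing one; refuse moves outside the mask."""
        if (element, attach_to, order) not in self.valid_actions():
            return False
        self.atoms.append(element)
        self.bonds.append((attach_to, len(self.atoms) - 1, order))
        return True


# Build the heavy-atom skeleton of acetic acid, CC(=O)O, one step at a time.
mol = MolGraph("C")
mol.add_atom("O", attach_to=0, order=2)  # carbonyl oxygen
mol.add_atom("O", attach_to=0, order=1)  # hydroxyl oxygen
mol.add_atom("C", attach_to=0, order=1)  # methyl carbon
print(mol.free_valence(0))               # 0: the carboxyl carbon is saturated
print(mol.add_atom("N", attach_to=0))    # False: this move is masked out
```

In a learned model, the network's probabilities over actions would be renormalized over `valid_actions()` only, so invalid structures can never be sampled.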

4. Diffusion Models

Emerging as a state-of-the-art technique, diffusion models learn to reverse a gradual noising process: generation starts from pure random noise, which the model iteratively denoises into a realistic sample. For molecules, this involves starting with a noisy representation (e.g., a randomized 3D conformation or a scrambled graph) and gradually refining it into a coherent, chemically valid structure. They excel at producing diverse and high-quality samples.

Example: A diffusion model could be trained on a dataset of 3D molecular conformations. It learns to reverse the process of adding noise to these conformations. To generate a new molecule, it starts with a completely random 3D arrangement of atoms and progressively denoises it, guided by the learned distribution of real molecules, until a stable and chemically sensible 3D structure emerges.
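The noising half of this process has a closed form in the widely used DDPM formulation, one common choice among several. The sketch below assumes a linear β schedule and treats every atom coordinate as an independent Gaussian variable. `predict_noise` is a hypothetical stand-in for the trained network (in molecular work typically E(3)-equivariant), which is not shown, and atom types, which real molecular diffusion models also generate, are ignored.

```python
import math
import random


def linear_beta_schedule(T, beta_start=1e-4, beta_end=0.02):
    """Noise variance added at each of T forward steps."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]


def cumulative_alphas(betas):
    """alpha_bar_t = prod_{s<=t} (1 - beta_s): how much of x_0 survives after step t."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def noise_coordinates(coords, t, alpha_bars, rng):
    """Forward process in closed form: x_t = sqrt(a_bar) * x_0 + sqrt(1 - a_bar) * eps."""
    keep, mix = math.sqrt(alpha_bars[t]), math.sqrt(1.0 - alpha_bars[t])
    noise = [[rng.gauss(0.0, 1.0) for _ in atom] for atom in coords]
    noisy = [
        [keep * c + mix * e for c, e in zip(atom, e_row)]
        for atom, e_row in zip(coords, noise)
    ]
    return noisy, noise  # the network is trained to recover `noise` from `noisy`


def reverse_step(x_t, t, betas, alpha_bars, predict_noise, rng):
    """One DDPM denoising step x_t -> x_{t-1} using a (hypothetical) noise predictor."""
    beta = betas[t]
    eps_hat = predict_noise(x_t, t)
    coef = beta / math.sqrt(1.0 - alpha_bars[t])
    sigma = math.sqrt(beta) if t > 0 else 0.0  # no fresh noise on the final step
    return [
        [(c - coef * e) / math.sqrt(1.0 - beta) + sigma * rng.gauss(0.0, 1.0)
         for c, e in zip(atom, e_row)]
        for atom, e_row in zip(x_t, eps_hat)
    ]


rng = random.Random(0)
betas = linear_beta_schedule(1000)
alpha_bars = cumulative_alphas(betas)
water = [[0.0, 0.0, 0.0], [0.96, 0.0, 0.0], [-0.24, 0.93, 0.0]]  # rough O, H, H positions (angstroms)
noisy, noise = noise_coordinates(water, 999, alpha_bars, rng)    # almost pure noise at t = 999
```

Generation runs `reverse_step` from t = T - 1 down to 0, starting from Gaussian noise; the quality of the result depends entirely on how well the trained `predict_noise` estimates the added noise.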

5. Transformer-based Models

Inspired by their success in natural language processing, Transformers are being adapted to molecular generation. By treating SMILES strings as sequences of tokens, or by using graph-based attention mechanisms, these models can learn complex dependencies and generate novel structures.

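Example: ChemBERTa, a Transformer-based model pre-trained with masked-language modeling on millions of SMILES strings, learns chemical representations that are most often fine-tuned for property prediction. For generation, autoregressive (decoder-style) chemical language models are more common: given a partial SMILES string or a set of desired properties, such a model can complete or generate a full SMILES string representing a novel molecule.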
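Treating SMILES as a token sequence starts with splitting strings into chemically meaningful tokens, so that "Cl", "Br", bracket atoms such as "[nH]", and two-digit ring closures such as "%10" are not broken into single characters. The regular expression below is of the kind widely used in chemical language modeling; treat it as a reasonable default, not necessarily the tokenizer any specific model (ChemBERTa included) uses.

```python
import re

# Bracket atoms, two-letter halogens, aromatic atoms, bonds, branches, and ring-closure labels.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles):
    """Split a SMILES string into tokens; raise if any character is left unmatched."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


print(tokenize("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin, one token per atom, bond, branch, or ring label
```

The round-trip check matters because `re.findall` silently skips characters that match no alternative; without it, an unexpected symbol would simply vanish from the token sequence.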

Challenges in Molecular Generation

Despite these advancements, several challenges remain:

- Chemical Validity: Ensuring generated molecules adhere to fundamental chemical rules (e.g., valency, bond lengths).

- Synthesizability: A generated molecule is only useful if it can actually be synthesized in a lab. Predicting this is complex.

- Novelty and Diversity: Generating truly new molecules, not just slight variations of existing ones, while maintaining diversity to explore the chemical space broadly.

- Property Prediction: Accurately predicting desired properties (e.g., binding affinity, toxicity) for generated molecules, often requiring integration with predictive models.
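Several of these challenges are tracked with simple batch-level metrics. The sketch below computes validity, uniqueness, and novelty in the spirit of generative-chemistry benchmarks such as GuacaMol and MOSES (their exact definitions differ slightly). It assumes the inputs are canonical SMILES, so identical molecules compare equal as strings, and it takes `is_valid` as a parameter; in practice that check is a real chemistry parser such as RDKit. The ring-label parity check used in the example is a hypothetical placeholder, not a validity test.

```python
def generation_metrics(generated, training_set, is_valid):
    """Validity, uniqueness, and novelty of a batch of generated (canonical) SMILES."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


# Toy batch; the placeholder check only rejects an unpaired single-digit ring label.
training = {"CCO", "CC(=O)O"}
samples = ["CCO", "CCN", "CCN", "C1CC", "CC(=O)N"]
metrics = generation_metrics(samples, training, is_valid=lambda s: s.count("1") % 2 == 0)
print(metrics)
```

Synthesizability and property targets are harder to reduce to a one-line metric, which is why they are usually handled by separate predictive models.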

Protein Structure Prediction and Design: Beyond AlphaFold

The scientific community was electrified by AlphaFold2's breakthrough in predicting protein structures with unprecedented accuracy. While AlphaFold is primarily a predictive model, its success has paved the way for generative protein design.

From Prediction to Generation

AlphaFold2 (and successors such as AlphaFold3, which also predicts interactions with ligands, nucleic acids, and other proteins) has largely solved the structure-prediction side of the "protein folding problem": predicting a protein's 3D structure from its amino acid sequence, at least for many single-chain proteins. This knowledge is invaluable, but the next frontier is generative protein design: creating novel protein sequences that fold into desired structures or perform specific functions.

Generative Models for Protein Design: Researchers are now using diffusion models, GANs, and even specialized Transformer architectures to:

- De novo protein design: Generate entirely new protein sequences that fold into a specified target shape or exhibit a desired catalytic activity.

- Enzyme engineering: Design enzymes with enhanced activity or specificity for industrial or therapeutic applications.

- Antibody design: Create novel antibodies with improved binding affinity or broader neutralization capabilities.

- Functional site generation: Design specific protein regions that interact with other molecules (e.g., drug targets).

Example: A diffusion model could be trained on a vast dataset of known protein structures. To design a new protein, one might specify a desired active site geometry. The diffusion model would then generate a protein backbone and sequence that is likely to fold into that geometry, effectively creating a custom enzyme or binding protein.

Automated Hypothesis Generation and Experimental Design

The most ambitious application of Generative AI in science moves beyond merely creating molecules or proteins to generating ideas, hypotheses, and experimental plans. This is where AI truly begins to augment the scientific method itself.

LLMs as Scientific Cognition Engines

Large Language Models, pre-trained on vast corpora of text, are proving adept at synthesizing information and generating coherent narratives. When fine-tuned on scientific literature, patents, and experimental data, LLMs can:

- Identify Research Gaps: By analyzing large bodies of literature, an LLM can help flag areas where current knowledge is sparse or contradictory.

- Propose Novel Hypotheses: Based on observed correlations and patterns in disparate datasets, an LLM could suggest new mechanisms of action for drugs, novel material properties, or unexplored biological pathways.

- Summarize and Synthesize: Condense vast amounts of information into actionable insights, helping researchers stay abreast of rapidly evolving fields.

- Design Experiments: Suggest specific experimental protocols, reagents, and controls to test a hypothesis, drawing from established methodologies.

Example: An LLM trained on immunology literature might analyze studies on autoimmune diseases and identify a recurring but under-investigated interaction between a specific cytokine and a cell receptor. It could then hypothesize that modulating this interaction could be a therapeutic target and propose a series of in vitro and in vivo experiments to validate this hypothesis, including specific cell lines, assays, and animal models.

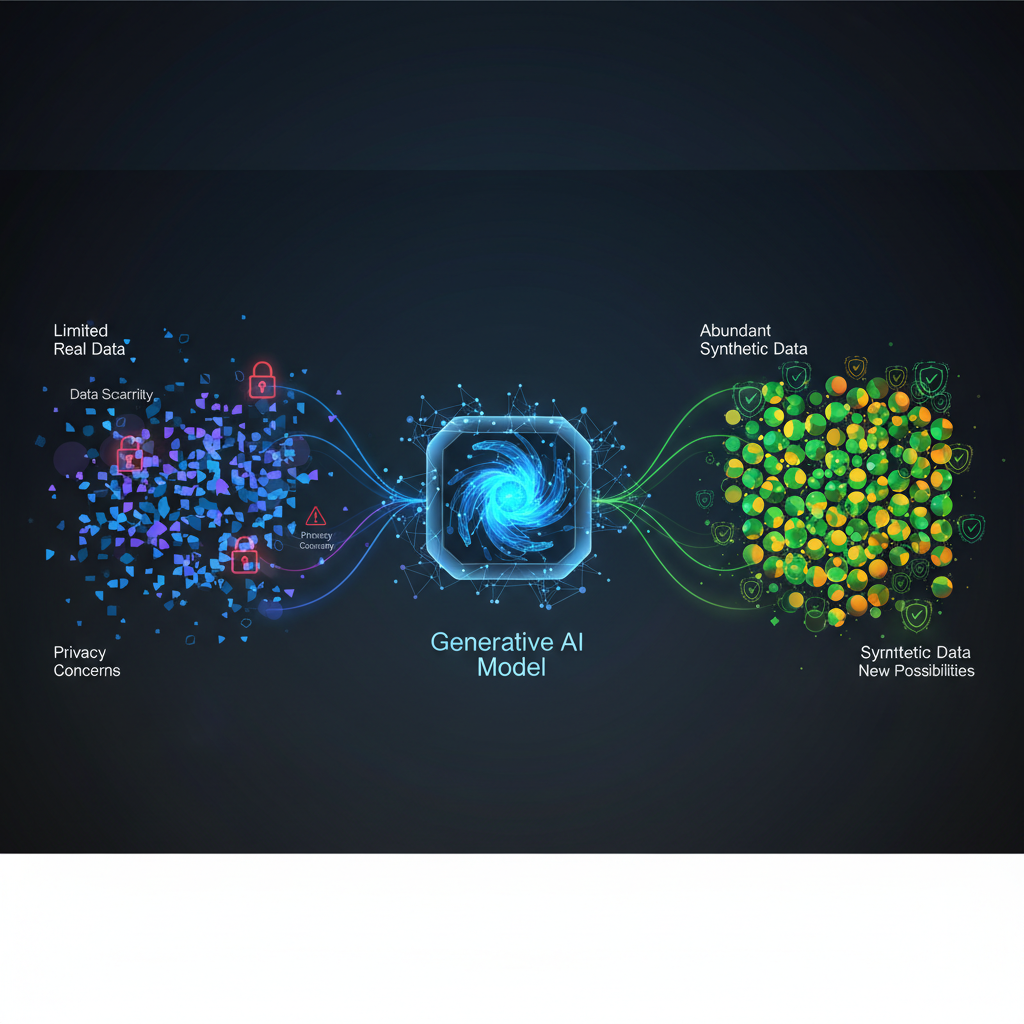

Active Learning and Reinforcement Learning for Iterative Discovery

Integrating generative models with active learning and reinforcement learning creates powerful feedback loops for iterative discovery.

- Active Learning: The AI proposes a small batch of promising molecules or experimental conditions. These are then synthesized/tested (either computationally via simulation or physically in a lab). The results are fed back to the generative model, which uses this new data to refine its understanding and generate even better candidates in the next iteration. This minimizes costly and time-consuming experiments by intelligently selecting the most informative ones.

- Reinforcement Learning (RL): RL agents can be trained to navigate the vast chemical or material space, with rewards given for generating molecules that meet specific property criteria. The agent learns a policy for constructing molecules that optimize for multiple objectives simultaneously (e.g., high binding affinity, low toxicity, good synthesizability).
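The active-learning loop can be sketched at toy scale. In this deliberately small example, a fixed candidate pool stands in for the generative model, `oracle` stands in for the lab experiment or simulation, and the surrogate is a nearest-neighbor lookup whose "uncertainty" is simply the distance to the closest measured point. All of these are simplifying assumptions; real systems typically use Gaussian processes or model ensembles, but the propose, measure, and update structure is the same.

```python
def nearest_measured(labeled, x):
    """Closest already-measured candidate (x, y) to x."""
    return min(labeled, key=lambda item: abs(item[0] - x))


def acquisition(labeled, x, kappa):
    """Optimistic score: predicted value plus a bonus for being far from known data."""
    x_near, y_near = nearest_measured(labeled, x)
    return y_near + kappa * abs(x - x_near)


def active_learning(pool, oracle, seeds, rounds, batch_size, kappa=1.0):
    labeled = [(x, oracle(x)) for x in seeds]           # initial experiments
    remaining = [x for x in pool if x not in seeds]
    for _ in range(rounds):
        # Rank untested candidates with the current surrogate, then "run" the top batch.
        remaining.sort(key=lambda x: acquisition(labeled, x, kappa), reverse=True)
        batch, remaining = remaining[:batch_size], remaining[batch_size:]
        labeled.extend((x, oracle(x)) for x in batch)   # feed the results back
    best = max(labeled, key=lambda item: item[1])
    return best, labeled


# Hypothetical one-dimensional "property landscape" with its optimum at x = 3.
pool = [i * 0.5 for i in range(13)]                     # 0.0, 0.5, ..., 6.0
best, labeled = active_learning(
    pool, lambda x: -(x - 3.0) ** 2, seeds=[0.0, 6.0], rounds=3, batch_size=2
)
print(best[0], len(labeled))  # the optimum is found with 8 of the 13 possible measurements
```

The `kappa` parameter trades off exploiting high predictions against exploring poorly covered regions; setting it to zero turns the loop into pure greedy selection.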

Example: An RL agent could be tasked with designing a new catalyst. It would "play" a game where each move is adding an atom or bond. A simulation or a predictive model would then evaluate the catalytic activity of the resulting molecule, providing a reward signal. Over many iterations, the RL agent learns to design highly effective catalysts without explicit human guidance on intermediate steps.
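The reward-driven learning described in this example can be illustrated with REINFORCE, one of the simplest policy-gradient methods, on a toy version of the problem. Here the "molecule" is a fixed-length string of heavy atoms with one choice per position, and `toy_reward` is a hypothetical stand-in for the simulation or property predictor (it simply rewards nitrogen content). Real systems use sequence or graph policies, richer actions, and multi-objective rewards, but the core update is the same: raise the log-probability of the chosen actions in proportion to how much better than average their reward was.

```python
import math
import random

ELEMENTS = ["C", "N", "O"]


def softmax(logits):
    top = max(logits)
    exps = [math.exp(v - top) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def toy_reward(seq):
    """Hypothetical property score in [0, 1]; a real system would call a predictor or simulator."""
    return seq.count("N") / len(seq)


def reinforce(length=4, episodes=3000, lr=0.5, seed=0):
    rng = random.Random(seed)
    logits = [[0.0] * len(ELEMENTS) for _ in range(length)]  # independent policy per position
    baseline = 0.0                                            # running mean reward (variance reduction)
    for _ in range(episodes):
        probs = [softmax(row) for row in logits]
        actions = [rng.choices(range(len(ELEMENTS)), weights=p)[0] for p in probs]
        reward = toy_reward("".join(ELEMENTS[a] for a in actions))
        advantage = reward - baseline
        baseline += 0.1 * (reward - baseline)
        for row, a, p in zip(logits, actions, probs):
            for k in range(len(ELEMENTS)):
                # Gradient of log softmax(row)[a] with respect to row[k].
                row[k] += lr * advantage * ((1.0 if k == a else 0.0) - p[k])
    return logits


policy = reinforce()
greedy = "".join(ELEMENTS[max(range(len(ELEMENTS)), key=row.__getitem__)] for row in policy)
print(greedy)  # the learned policy should favor N at every position
```

Subtracting the running-mean baseline does not change the expected gradient, but it substantially reduces its variance, which is why some form of baseline appears in most practical policy-gradient setups.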

The Rise of "Robot Scientists"

The ultimate vision is the "robot scientist": an autonomous system that combines AI-driven hypothesis generation, automated robotic labs for experimentation, and AI for data analysis and iteration. Systems like "Adam" and "Eve" (developed at Aberystwyth University and the University of Manchester) have already demonstrated the ability to autonomously design and conduct experiments, analyze results, and formulate new hypotheses, Adam in yeast functional genomics and Eve in early-stage drug screening. While still in early stages, this foreshadows a future where discovery cycles are dramatically accelerated.

Practical Applications and Transformative Impact

The implications of Generative AI in scientific discovery are profound and far-reaching:

1. Drug Discovery and Development

- Accelerated Lead Optimization: Rapidly design and optimize drug candidates with improved potency, selectivity, and safety profiles. Companies like Insilico Medicine and Recursion Pharmaceuticals are using AI to identify novel targets and design new molecules, significantly compressing the early stages of drug discovery.

- Personalized Medicine: Design drugs tailored to an individual's genetic makeup or disease markers, potentially leading to more effective and safer treatments.

- Rare Diseases: Discover treatments for diseases that traditionally lack commercial incentive due to small patient populations.

- Antimicrobial Resistance: Design novel antibiotics or antiviral compounds to combat emerging infectious diseases and antibiotic-resistant superbugs.

2. Materials Science

- Novel Materials: Design materials with unprecedented properties, such as high-temperature superconductors, super-strong alloys, efficient catalysts for industrial processes, or advanced battery materials.

- Sustainable Materials: Discover biodegradable plastics, efficient CO2 capture materials, or non-toxic alternatives to hazardous chemicals.

- Functional Materials: Create materials with specific optical, electrical, or magnetic properties for advanced electronics, sensors, or quantum computing.

3. Agriculture and Environmental Science

- Crop Protection: Design new pesticides, herbicides, or fungicides that are more effective, environmentally friendly, and less prone to resistance development.

- Fertilizer Optimization: Develop novel compounds that enhance nutrient uptake in plants, reducing the need for excessive fertilization.

- Pollution Remediation: Design molecules or materials for breaking down pollutants, sequestering carbon, or filtering contaminants from water and air.

Why This Matters to AI Practitioners and Enthusiasts

This field is a vibrant intersection of cutting-edge AI research and real-world impact.

- Cutting-Edge Techniques: It's a playground for advanced deep learning, including novel architectures, multi-modal learning, and sophisticated training methodologies. You'll encounter techniques like conditional generation, constrained optimization, and active learning loops.

- Domain Adaptation: It showcases the incredible versatility of core ML concepts when adapted and specialized for highly complex, data-rich scientific domains. Understanding how to represent molecules as graphs or sequences, or how to embed biological knowledge into generative models, is a valuable skill.

- Ethical Considerations: The power to design new drugs or materials comes with significant ethical responsibilities. Questions around intellectual property, the potential for misuse (e.g., designing harmful compounds), and ensuring equitable access to AI-driven discoveries are paramount. This is a field where "responsible AI" is not just a buzzword but a critical design principle.

- Career Opportunities: The demand for AI researchers and engineers who can bridge the gap between machine learning and scientific disciplines (chemistry, biology, materials science) is exploding. Companies in biotech, pharma, and advanced materials are actively recruiting.

- Tangible Impact: Few fields offer the satisfaction of knowing your work could directly lead to new cures, sustainable technologies, or a deeper understanding of the universe. This is AI for good, with a capital G.

- Open Problems: The field is still nascent, with many unsolved challenges. Ensuring synthesizability, accurately predicting complex biological interactions, integrating diverse data types (genomics, proteomics, imaging), and scaling these methods to industrial levels are all active areas of research, offering fertile ground for new contributions.

Conclusion

Generative AI is not just another tool in the scientist's arsenal; it's a fundamental shift in how discovery happens. By moving from a trial-and-error paradigm to an intelligent design paradigm, we are poised to accelerate scientific progress at an unprecedented pace. From designing life-saving drugs and revolutionary materials to autonomously formulating and testing scientific hypotheses, the potential of Generative AI to reshape our world is immense. For AI practitioners and enthusiasts, this represents an exciting frontier – a chance to apply the most advanced machine learning techniques to problems that truly matter, making a tangible difference in human health, environmental sustainability, and our collective future. The era of AI-driven scientific discovery has only just begun, and its most profound breakthroughs are yet to be unveiled.