RAG Beyond Basics: Advanced Techniques for Robust LLM Applications

Explore the evolution of Retrieval-Augmented Generation (RAG) from a basic concept to an indispensable technique. This deep dive uncovers advanced strategies and optimizations to build reliable, grounded, and up-to-date LLM applications, moving beyond simple information lookup.

The era of Large Language Models (LLMs) has ushered in unprecedented capabilities for natural language understanding and generation. From writing poetry to drafting code, these models have demonstrated a remarkable grasp of language. However, their power comes with inherent limitations: they can "hallucinate" facts, their knowledge is often static and outdated, and they lack domain-specific expertise unless extensively fine-tuned. Enter Retrieval-Augmented Generation (RAG) – a paradigm that has rapidly evolved from a research concept to an indispensable technique for building reliable, grounded, and up-to-date LLM applications.

While the basic premise of RAG – fetching external information to augment an LLM's prompt – is now widely understood, the true power and complexity lie in the advanced techniques that push RAG "beyond basic augmentation." This deep dive will explore the sophisticated strategies, optimizations, and considerations that transform RAG from a simple lookup mechanism into a robust, intelligent information synthesis system.

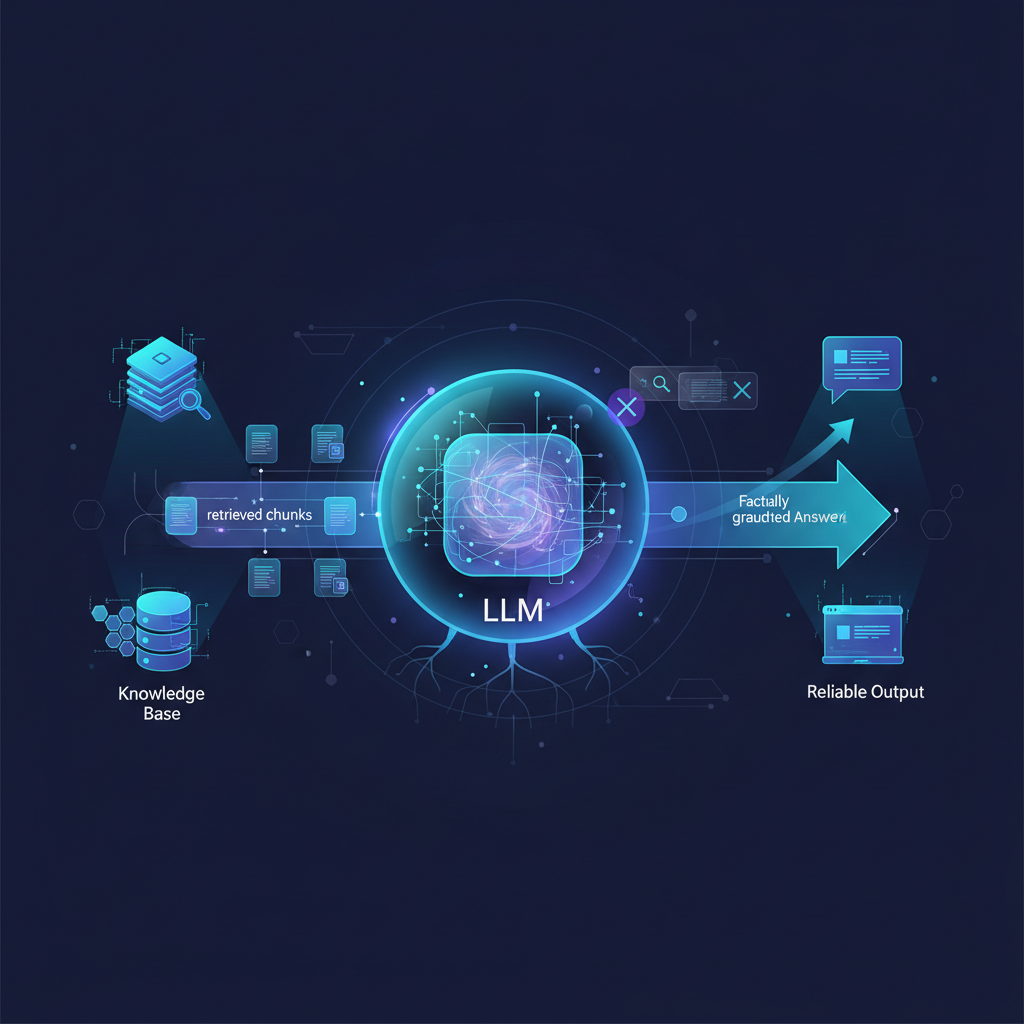

The Foundation: Understanding Basic RAG

Before delving into advanced concepts, let's briefly recap the core RAG pipeline. Imagine an LLM as a brilliant but isolated scholar. It knows a lot from its initial training, but it can't access new books or specialized journals. RAG provides this access.

The basic RAG process involves three main steps:

- User Query: A user asks a question or provides a prompt.

- Retrieval: This query is used to search an external knowledge base (e.g., a collection of documents, articles, databases). Typically, the query is converted into a numerical representation (an "embedding") using an embedding model. This embedding is then used to find semantically similar document chunks within a vector database. The most relevant k chunks are retrieved.

- Augmentation & Generation: The retrieved chunks are combined with the original user query to form an augmented prompt. This enriched prompt is then fed to the LLM, which generates a response grounded in the provided context.

This fundamental approach significantly mitigates hallucinations and allows LLMs to access current or proprietary information. However, its effectiveness is heavily dependent on the quality of retrieval and the LLM's ability to utilize the provided context effectively. This is where advanced RAG techniques come into play.
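The three steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model, the "vector database" is a plain list, and the function names and sample chunks are hypothetical. The LLM call itself is left out; the sketch stops at the augmented prompt.

```python
import math
import re
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Retrieval step: rank every chunk against the query and keep the top k."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, context: list[str]) -> str:
    """Augmentation step: combine retrieved chunks and the query into one prompt."""
    joined = "\n".join(f"- {c}" for c in context)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{joined}\n"
        f"Question: {query}\nAnswer:"
    )

# Hypothetical knowledge base and query, for illustration only.
chunks = [
    "The warranty period for the X100 router is 24 months.",
    "The X100 router supports Wi-Fi 6 and has four LAN ports.",
    "Our office is closed on public holidays.",
]
query = "How long is the X100 warranty?"
top = retrieve(query, chunks, k=2)
prompt = build_prompt(query, top)  # this string would be sent to the LLM
```

In a real system, `embed` would call an embedding model and the sorted scan would be replaced by a vector database query, but the shape of the pipeline stays the same.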

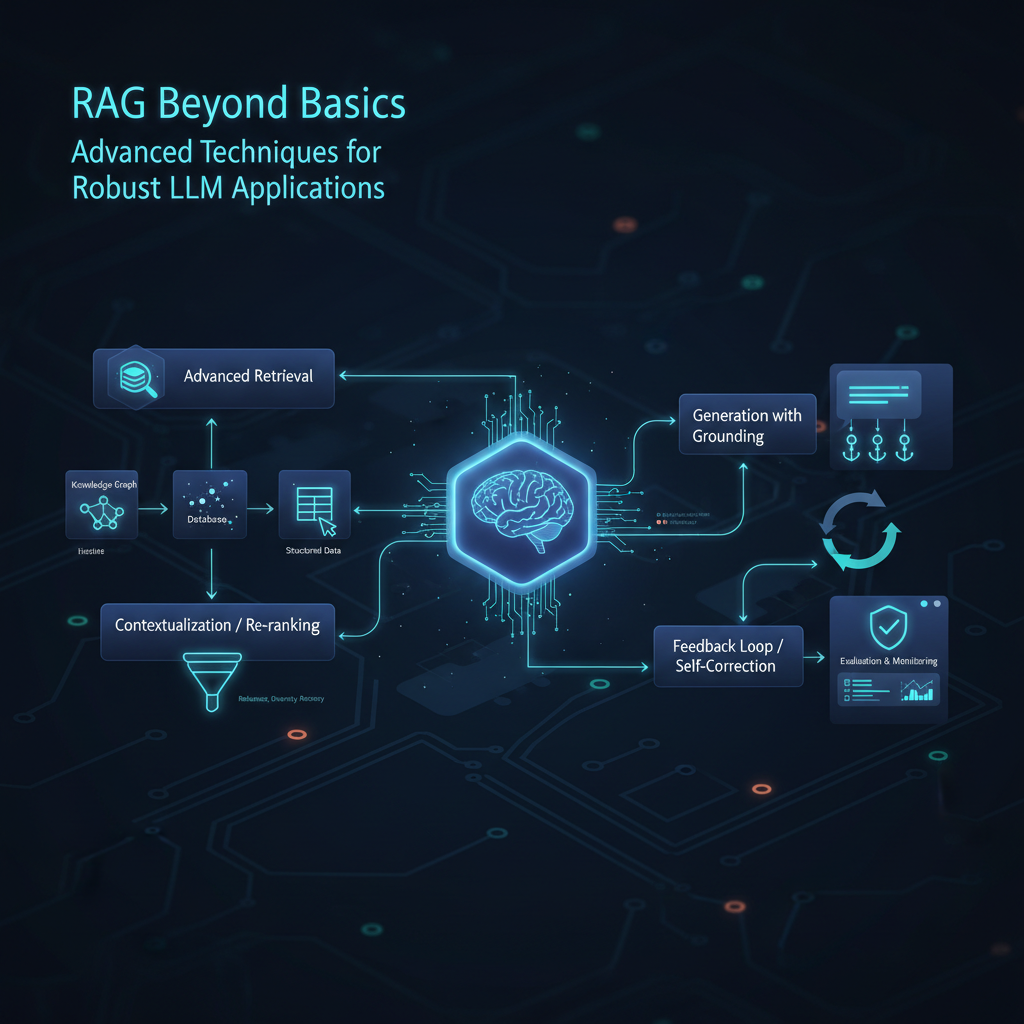

Beyond Basic RAG: Advanced Techniques & Optimization

The "beyond basic" aspect of RAG focuses on refining every stage of this pipeline, from how we prepare our knowledge base to how the LLM processes and generates its final answer.

Advanced Retrieval Strategies: Finding the Needle in the Haystack

The quality of the retrieved context is paramount. "Garbage in, garbage out" applies emphatically here. Advanced retrieval strategies aim to ensure that the most relevant, comprehensive, and non-redundant information is presented to the LLM.

Pre-Retrieval Optimization: Enhancing the Search Query

Instead of directly embedding the user's raw query, these techniques manipulate or enrich the query to improve retrieval accuracy.

- Query Transformation/Rewriting: Complex user queries can be ambiguous or too broad. An LLM can be employed to rephrase, expand, or break down the original query into several sub-queries. For example, if a user asks, "What are the latest advancements in quantum computing and their implications for cryptography?", an LLM could rewrite it into:

- "Recent breakthroughs in quantum computing hardware."

- "New quantum algorithms for cryptography."

- "Impact of quantum computing on current encryption standards."

Each sub-query can then be used for retrieval, with the results aggregated.

- HyDE (Hypothetical Document Embeddings): This technique uses an LLM to generate a hypothetical answer to the user's query before retrieval. This hypothetical answer, often longer and more descriptive than the original query, is then embedded in place of the query. The intuition is that an embedding of a full, relevant document (even a hypothetical one) will be semantically closer to actual relevant documents in the vector store than an embedding of a short, potentially vague query.

- Example Prompt for HyDE:

    You are an expert answering questions about [domain].
    Generate a concise, hypothetical answer to the following question:
    Question: {user_query}
    Hypothetical Answer:

The generated Hypothetical Answer is then embedded and used for vector search.

- Multi-hop Retrieval: For questions requiring synthesis from multiple, disparate pieces of information or inferential steps, a single retrieval might not suffice. Multi-hop RAG involves an iterative process where an initial retrieval answers part of the question or identifies a sub-question. The LLM then uses this partial answer to formulate a new query for a subsequent retrieval step, chaining together information to build a complete answer. This mimics human reasoning processes.

- Metadata Filtering: Modern vector databases allow for combining semantic search with structured metadata filtering. This is crucial for precision. For instance, if you're searching for "documents about Q3 earnings" but only want those published after a certain date or by a specific department, metadata filters can narrow down the semantic search results, ensuring both relevance and compliance with specific criteria.
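Two of the ideas above, running several rewritten sub-queries and narrowing results with a metadata filter, combine naturally. The sketch below is a simplified illustration, not any particular library's API: the `Chunk` type, the `year` field, the keyword-overlap scorer, and the "keep each chunk's best score" aggregation rule are all assumptions chosen to keep the example self-contained. The sub-queries are hard-coded where an LLM would normally generate them.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Chunk:
    chunk_id: str
    text: str
    year: int  # hypothetical metadata field used for filtering

def keyword_score(query: str, text: str) -> float:
    """Toy relevance score: fraction of query words present in the text."""
    q_words = set(query.lower().split())
    t_words = set(text.lower().split())
    return len(q_words & t_words) / len(q_words) if q_words else 0.0

def search(query, chunks, k, min_year):
    """Apply the metadata filter first, then rank the surviving chunks."""
    allowed = [c for c in chunks if c.year >= min_year]
    scored = [(keyword_score(query, c.text), c) for c in allowed]
    scored = [(s, c) for s, c in scored if s > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]

def multi_query_search(sub_queries, chunks, k, min_year):
    """Run every sub-query and keep each chunk's best score across them."""
    best = {}
    for sq in sub_queries:
        for score, chunk in search(sq, chunks, k, min_year):
            if score > best.get(chunk.chunk_id, (0.0, None))[0]:
                best[chunk.chunk_id] = (score, chunk)
    ranked = sorted(best.values(), key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in ranked]

corpus = [
    Chunk("a", "quantum computing hardware breakthroughs in error correction", 2024),
    Chunk("b", "quantum algorithms for cryptography and key exchange", 2023),
    Chunk("c", "impact of quantum computing on encryption standards", 2019),
    Chunk("d", "classical encryption standards overview", 2024),
]
# In practice an LLM would produce these from the user's original question.
sub_queries = [
    "quantum computing hardware",
    "quantum algorithms cryptography",
    "quantum computing encryption standards",
]
results = multi_query_search(sub_queries, corpus, k=2, min_year=2022)
result_ids = [c.chunk_id for c in results]
```

Chunk "c" matches the third sub-query well but is excluded by the `min_year` filter, which shows how metadata constraints take precedence over semantic similarity.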

Post-Retrieval Optimization: Re-ranking for Precision

Once an initial set of k documents is retrieved, not all are equally relevant or useful. Re-ranking techniques refine this set to present the most pertinent information to the LLM.

- Cross-Encoder Re-ranking: While bi-encoder models (like most embedding models) are efficient for initial retrieval, cross-encoder models offer superior relevance scoring. A cross-encoder takes both the query and a document chunk as input simultaneously and outputs a single relevance score. This allows for a more nuanced understanding of their interaction. Because they are computationally more expensive, they are typically used to re-rank a smaller set of already retrieved candidates, rather than the entire knowledge base.

- LLM-based Re-ranking: Leveraging the LLM's own understanding, it can be prompted to evaluate the relevance of the retrieved chunks to the original query. The LLM can assign scores, reorder, or even summarize the chunks to prioritize the most useful ones.

- Example Prompt for LLM Re-ranking:

    Given the user query: "{user_query}"
    And the following document chunks:
    [ {chunk_1}, {chunk_2}, ... {chunk_N} ]
    Please rank these chunks from most relevant to least relevant for answering the query.
    Provide a brief justification for your top 3 choices.

- Diversity-aware Re-ranking: Sometimes, the top k retrieved results might be highly similar or redundant. Diversity-aware re-ranking aims to ensure that the selected chunks cover different aspects of the query, providing a broader and more comprehensive context to the LLM. Maximal Marginal Relevance (MMR) is a common algorithm for this: it selects chunks one at a time, rewarding relevance to the query and penalizing similarity to chunks already selected.
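To make the MMR idea concrete, here is a minimal sketch. It assumes documents and the query are already embedded as plain lists of floats; the function names, the `lambda_mult` parameter name, and the tiny two-dimensional vectors are illustrative choices, not taken from any specific library. At each step it picks the candidate maximizing `lambda_mult * relevance - (1 - lambda_mult) * redundancy`, where redundancy is the highest similarity to anything already selected.

```python
import math

def cosine(a, b):
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def mmr(query_vec, doc_vecs, k, lambda_mult=0.5):
    """Return indices of up to k documents chosen greedily by MMR."""
    selected = []
    candidates = list(range(len(doc_vecs)))
    while candidates and len(selected) < k:
        def mmr_score(i):
            relevance = cosine(query_vec, doc_vecs[i])
            # Highest similarity to any already-selected document (0 if none yet).
            redundancy = max(
                (cosine(doc_vecs[i], doc_vecs[j]) for j in selected),
                default=0.0,
            )
            return lambda_mult * relevance - (1 - lambda_mult) * redundancy
        best = max(candidates, key=mmr_score)
        selected.append(best)
        candidates.remove(best)
    return selected

query_vec = [1.0, 0.0]
doc_vecs = [
    [1.0, 0.10],   # highly relevant
    [1.0, 0.12],   # near-duplicate of the first document
    [0.6, -0.80],  # less relevant, but covers a different direction
]
diverse = mmr(query_vec, doc_vecs, k=2, lambda_mult=0.5)
relevance_only = mmr(query_vec, doc_vecs, k=2, lambda_mult=1.0)
```

With `lambda_mult=1.0` the procedure reduces to plain relevance ranking and returns the two near-duplicates; with `lambda_mult=0.5` the second pick is the less similar third document.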

Advanced Chunking Strategies: Preparing the Knowledge Base

The way documents are broken down into "chunks" for indexing significantly impacts retrieval quality. Simple fixed-size chunking often leads to context fragmentation or irrelevant information.

- Semantic Chunking: Instead of arbitrary character limits, semantic chunking aims to group text based on its natural semantic boundaries. This could involve using NLP techniques to identify topic shifts, paragraph boundaries, or even using an LLM to determine coherent units of information. This ensures that each chunk represents a complete thought or concept.

- Hierarchical Chunking: This strategy involves creating chunks of varying sizes. Smaller chunks might be good for precise keyword matching, while larger chunks provide broader context. During retrieval, you might first retrieve small chunks and then, if necessary, expand to their larger "parent" chunks for more context.

- Parent Document Retrieval: A popular and effective technique. Small, semantically coherent chunks are indexed and retrieved. However, when a small chunk is identified as relevant, the entire larger document (or a larger section it came from) that contains it is passed to the LLM. This provides the LLM with the specific relevant information and the broader context, preventing loss of nuance.
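Parent document retrieval can be sketched as follows. This is a simplified illustration with assumed names (`split_into_children`, `retrieve_parents`) and a toy word-overlap scorer in place of embeddings: small child chunks are what gets scored, but the function returns the full parent documents they came from, deduplicated.

```python
import re

def tokens(text):
    """Lowercased word set, ignoring punctuation."""
    return set(re.findall(r"\w+", text.lower()))

def split_into_children(parent_id, text, size=8):
    """Split a parent document into small chunks that remember their parent."""
    words = text.split()
    return [
        {"parent_id": parent_id, "text": " ".join(words[i:i + size])}
        for i in range(0, len(words), size)
    ]

def overlap_score(query, text):
    """Toy relevance score: number of shared words."""
    return len(tokens(query) & tokens(text))

def retrieve_parents(query, parents, k_children=2):
    """Rank child chunks, then return each matching child's full parent once."""
    children = [
        child
        for pid, text in parents.items()
        for child in split_into_children(pid, text)
    ]
    ranked = sorted(children, key=lambda c: overlap_score(query, c["text"]), reverse=True)
    parent_ids = []
    for child in ranked[:k_children]:
        if overlap_score(query, child["text"]) > 0 and child["parent_id"] not in parent_ids:
            parent_ids.append(child["parent_id"])
    return [parents[pid] for pid in parent_ids]

# Hypothetical documents, for illustration only.
parents = {
    "refund-policy": (
        "Refunds are issued within 14 days of purchase. Items must be unused "
        "and returned in original packaging. Shipping fees are not refundable."
    ),
    "shipping-policy": (
        "Orders ship within two business days. International delivery can "
        "take up to three weeks."
    ),
}
context_docs = retrieve_parents("original packaging", parents, k_children=2)
```

Here two different child chunks of the refund policy match the query, yet the LLM would receive the whole refund policy exactly once rather than two fragments.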

Adaptive Generation/Prompting: Smarter LLM Interaction

Once the optimal context is retrieved, how the LLM uses it is the final critical step.

- Contextual Compression: LLMs have finite context windows. Simply concatenating all retrieved chunks can quickly exceed these limits, especially for complex queries or verbose documents. Contextual compression uses an LLM to summarize or extract key information from the retrieved documents before sending them to the final generation LLM. This ensures that only the most critical information is passed, optimizing token usage and reducing noise.

- Example Prompt for Compression:

    Summarize the following document chunk, focusing on information relevant to the query: "{user_query}"
    Document: {retrieved_chunk}
    Summary:

- Iterative RAG / Self-Correction: This is a dynamic approach where the LLM doesn't just generate an answer once. After an initial generation, the LLM might be prompted to evaluate its own answer against the retrieved context. If it identifies gaps, inconsistencies, or areas where more information is needed, it can formulate a new query and initiate another retrieval step, refining its answer iteratively. This mimics a researcher who reads an article, identifies further questions, and then seeks out more sources.

- Chain-of-Thought (CoT) RAG: By combining CoT prompting with RAG, we can guide the LLM to reason step-by-step through the retrieved context. Instead of just asking for a direct answer, we prompt the LLM to first analyze the documents, identify key facts, synthesize information, and then formulate its response. This makes the LLM's reasoning more transparent and its answers more grounded.

- Example CoT RAG Prompt Structure:

    You are an expert answering questions based on provided documents.
    User Query: {user_query}
    Retrieved Documents:
    {document_1}
    {document_2}
    ...
    Step 1: Identify all relevant facts from the documents that pertain to the user query.
    Step 2: Synthesize these facts to form a coherent understanding of the topic.
    Step 3: Based on your synthesis, provide a comprehensive answer to the user query.
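The iterative RAG / self-correction loop described above can be sketched as a small control loop. Everything here is an assumption made for illustration: the function `iterative_rag`, its three pluggable callables, and the stubbed knowledge base. In a real system, `generate` and `find_gap` would be LLM calls and `retrieve` would query a vector store.

```python
from typing import Callable, Optional

def iterative_rag(
    question: str,
    retrieve: Callable[[str], list],
    generate: Callable[[str, list], str],
    find_gap: Callable[[str, str, list], Optional[str]],
    max_rounds: int = 3,
):
    """Retrieve, answer, self-check, and re-query until no gap remains."""
    context = []
    query = question
    answer = ""
    for _ in range(max_rounds):
        for chunk in retrieve(query):
            if chunk not in context:  # avoid feeding duplicates back in
                context.append(chunk)
        answer = generate(question, context)
        follow_up = find_gap(question, answer, context)
        if follow_up is None:  # the self-check found nothing missing
            break
        query = follow_up
    return answer, context

# Stubs standing in for a vector store and two LLM calls (hypothetical data).
KB = {
    "where was the founder of acme born": ["Acme was founded by Jane Doe."],
    "where was jane doe born": ["Jane Doe was born in Lisbon."],
}

def stub_retrieve(query):
    return KB.get(query.lower().rstrip("?"), [])

def stub_generate(question, context):
    return " ".join(context)

def stub_find_gap(question, answer, context):
    # A real critic would be an LLM prompt; this stub hard-codes one check.
    if "Jane Doe" in answer and "born" not in answer:
        return "Where was Jane Doe born?"
    return None

answer, context = iterative_rag(
    "Where was the founder of Acme born?",
    stub_retrieve, stub_generate, stub_find_gap,
)
```

The `max_rounds` cap matters in practice: without it, a critic that always finds a gap would loop forever and each round adds latency and cost.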

Evaluation Metrics for RAG Systems

Building advanced RAG systems requires rigorous evaluation. Unlike traditional NLP tasks, RAG evaluation needs to assess both the retrieval component and the generation component, as well as their interplay.

- Retrieval Metrics:

- Recall: What percentage of all truly relevant documents were retrieved?

- Precision: What percentage of the retrieved documents were actually relevant?

- MRR (Mean Reciprocal Rank): Measures the effectiveness of ranking, giving higher scores if the first relevant document appears earlier in the list.

- NDCG (Normalized Discounted Cumulative Gain): A more sophisticated ranking metric that considers the graded relevance of documents and their position.

- Generation Metrics:

- Faithfulness (or Groundedness): Is every claim in the generated answer supported by the retrieved context? This is crucial for preventing hallucinations.

- Answer Relevance: Is the generated answer directly addressing the user's query?

- Coherence: Is the answer well-structured and easy to understand?

- Completeness: Does the answer cover all aspects of the query based on the available context?

- End-to-End Metrics:

- Human Evaluation: The gold standard, though expensive and time-consuming. Human judges assess the quality of the final answer based on relevance, accuracy, completeness, and fluency.

- Automated RAG Evaluation Frameworks: Tools like Ragas, TruLens, and ARES provide programmatic ways to evaluate various aspects of RAG systems, often using LLMs themselves to judge faithfulness, relevance, and groundedness. This allows for faster iteration and continuous integration.
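The retrieval metrics listed above are simple to compute once you have a ranked result list and relevance labels. The sketch below uses their standard definitions; the function names, document IDs, and graded relevance values are hypothetical. NDCG here uses the linear-gain form with a log2 position discount.

```python
import math

def precision_at_k(retrieved, relevant, k):
    """Share of the top-k retrieved documents that are relevant."""
    return sum(1 for d in retrieved[:k] if d in relevant) / k

def recall_at_k(retrieved, relevant, k):
    """Share of all relevant documents that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for d in retrieved[:k] if d in relevant) / len(relevant)

def reciprocal_rank(retrieved, relevant):
    """1 / rank of the first relevant document, or 0 if none was retrieved."""
    for rank, d in enumerate(retrieved, start=1):
        if d in relevant:
            return 1.0 / rank
    return 0.0

def mean_reciprocal_rank(runs):
    """Average reciprocal rank over (retrieved, relevant) pairs."""
    return sum(reciprocal_rank(r, rel) for r, rel in runs) / len(runs)

def ndcg_at_k(retrieved, gains, k):
    """NDCG with graded relevance; `gains` maps doc id -> relevance grade."""
    def dcg(values):
        return sum(g / math.log2(i + 2) for i, g in enumerate(values))
    actual = dcg([gains.get(d, 0.0) for d in retrieved[:k]])
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return actual / ideal if ideal else 0.0

retrieved = ["d3", "d1", "d7", "d2"]
relevant = {"d1", "d2", "d5"}
gains = {"d1": 3, "d2": 1, "d5": 2}

p = precision_at_k(retrieved, relevant, k=4)   # 2 of 4 retrieved are relevant
r = recall_at_k(retrieved, relevant, k=4)      # 2 of 3 relevant were found
mrr = mean_reciprocal_rank([(retrieved, relevant), (["d5", "d9"], {"d5"})])
ndcg = ndcg_at_k(retrieved, gains, k=4)
```

Generation metrics such as faithfulness do not reduce to formulas like these, which is why the frameworks mentioned above typically rely on an LLM judge for them.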

Productionization Challenges

Deploying RAG systems in real-world scenarios introduces several engineering and operational challenges:

- Scalability: Handling vast knowledge bases (billions of documents) and high query throughput requires robust vector databases and efficient indexing strategies.

- Latency: Retrieval and generation must be fast enough to provide a good user experience. Optimizing embedding generation, vector search, and LLM inference times is critical.

- Cost Optimization: Managing API calls to LLMs and embedding models, as well as vector database infrastructure costs, requires careful resource management and potentially leveraging open-source alternatives.

- Data Freshness & Index Updates: Knowledge bases are dynamic. Strategies for incrementally updating vector indexes, handling document deletions, and ensuring that the LLM always accesses the most current information are essential.

- Security & Access Control: For sensitive data, RAG systems must integrate with existing access control mechanisms, ensuring that users only retrieve information they are authorized to see. This often involves filtering retrieved chunks based on user permissions.
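As one illustration of the access-control point, the sketch below filters retrieved chunks by group membership before they reach the prompt. The `RetrievedChunk` type, the `allowed_groups` field, and the group names are assumptions made for the example; a real deployment would derive them from its existing permission system.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RetrievedChunk:
    text: str
    allowed_groups: frozenset  # hypothetical ACL metadata stored with the chunk

def filter_by_permissions(chunks, user_groups):
    """Keep only chunks that share at least one group with the user."""
    user_groups = set(user_groups)
    return [c for c in chunks if c.allowed_groups & user_groups]

retrieved_chunks = [
    RetrievedChunk("Public holiday calendar for 2024.", frozenset({"all-staff"})),
    RetrievedChunk("Executive salary bands.", frozenset({"hr-admin"})),
]
visible = filter_by_permissions(retrieved_chunks, {"all-staff", "engineering"})
```

Filtering after retrieval is the simplest option but can leave fewer than k usable chunks. Where the vector database supports metadata filters, applying the same permission check inside the query avoids that problem.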

Key Tools and Frameworks

The RAG ecosystem is rapidly maturing, with several powerful tools simplifying its implementation:

- Vector Databases:

- Cloud-native: Pinecone, Weaviate, Qdrant, Milvus (often self-hosted or managed cloud).

- Local/In-memory: Chroma, FAISS (for smaller scale or development).

These databases are optimized for storing and querying high-dimensional vectors efficiently.

- Orchestration Frameworks:

- LangChain: Provides abstractions and components for building complex LLM applications, including RAG pipelines, agents, and chains.

- LlamaIndex: Specifically designed for data ingestion, indexing, and querying external data sources to augment LLMs, with a strong focus on RAG.

- Embedding Models:

- Proprietary: OpenAI Embeddings, Cohere Embeddings (often high quality but with API costs).

- Open-source: Hugging Face sentence-transformers library (offers a wide range of models like all-MiniLM-L6-v2, BAAI/bge-large-en-v1.5, etc., allowing for self-hosting).

- Large Language Models (LLMs):

- Proprietary: GPT-3.5/4 (OpenAI), Claude (Anthropic), Gemini (Google).

- Open-source: Llama 2/3 (Meta), Mistral (Mistral AI), Falcon (TII), Phi-3 (Microsoft) – these can be self-hosted or accessed via APIs.

Practical Applications

The advanced RAG techniques discussed here unlock a new generation of intelligent applications:

- Enterprise Search & Q&A: Imagine a customer support chatbot that can instantly pull the most up-to-date product specifications, troubleshooting guides, and customer history from internal databases to provide precise, personalized assistance. Or an HR bot that accurately answers policy questions based on the latest company handbook.

- Personalized Content Generation: A marketing tool that generates blog posts or ad copy grounded in specific campaign data, customer demographics, and brand guidelines, ensuring factual accuracy and tone consistency.

- Domain-Specific AI Assistants: Medical professionals using an AI assistant that synthesizes information from the latest research papers, patient records, and drug databases to aid in diagnosis or treatment planning. Financial analysts leveraging an AI to generate reports based on real-time market data and company filings.

- Fact-Checking & Information Verification: An LLM-powered tool that can automatically verify claims in news articles or social media posts by cross-referencing against a curated database of authoritative sources.

- Code Generation & Explanation: Developers using an AI assistant that not only generates code but also provides explanations and examples directly from their project's codebase, internal documentation, and specific API references.

Conclusion

Retrieval-Augmented Generation has moved beyond a theoretical concept to become a cornerstone of practical LLM deployment. By strategically enhancing retrieval, optimizing context preparation, and intelligently guiding LLM generation, we can overcome many of the inherent limitations of foundational models.

The journey "beyond basic augmentation" is an active and exciting frontier. It demands a blend of NLP expertise, information retrieval knowledge, and robust engineering practices. For AI practitioners and enthusiasts, mastering these advanced RAG techniques is not just about staying current; it's about building the next generation of reliable, accurate, and truly intelligent AI applications that can seamlessly integrate with the dynamic, ever-evolving world of information. As LLMs continue to grow in capability, their ability to effectively leverage external knowledge through sophisticated RAG will remain a critical differentiator in their real-world impact.