AI in QA: Revolutionizing Testing with Generative AI & Self-Healing Tests

Explore how Artificial Intelligence is fundamentally reshaping Quality Assurance in software development. This post delves into the transformative power of AI-powered generative test case generation and self-healing tests, moving QA beyond manual bottlenecks to proactive, automated efficiency in CI/CD pipelines.

The landscape of software development is undergoing a profound transformation, driven by the relentless pursuit of speed, quality, and efficiency. Continuous Integration, Continuous Delivery, and Continuous Deployment (CI/CD) have become the bedrock of modern software engineering, demanding that every stage of the development lifecycle be as automated and streamlined as possible. Yet, one critical area has often lagged: Quality Assurance (QA). Traditional QA processes, heavily reliant on manual effort and prone to bottlenecks, struggle to keep pace with rapid release cycles.

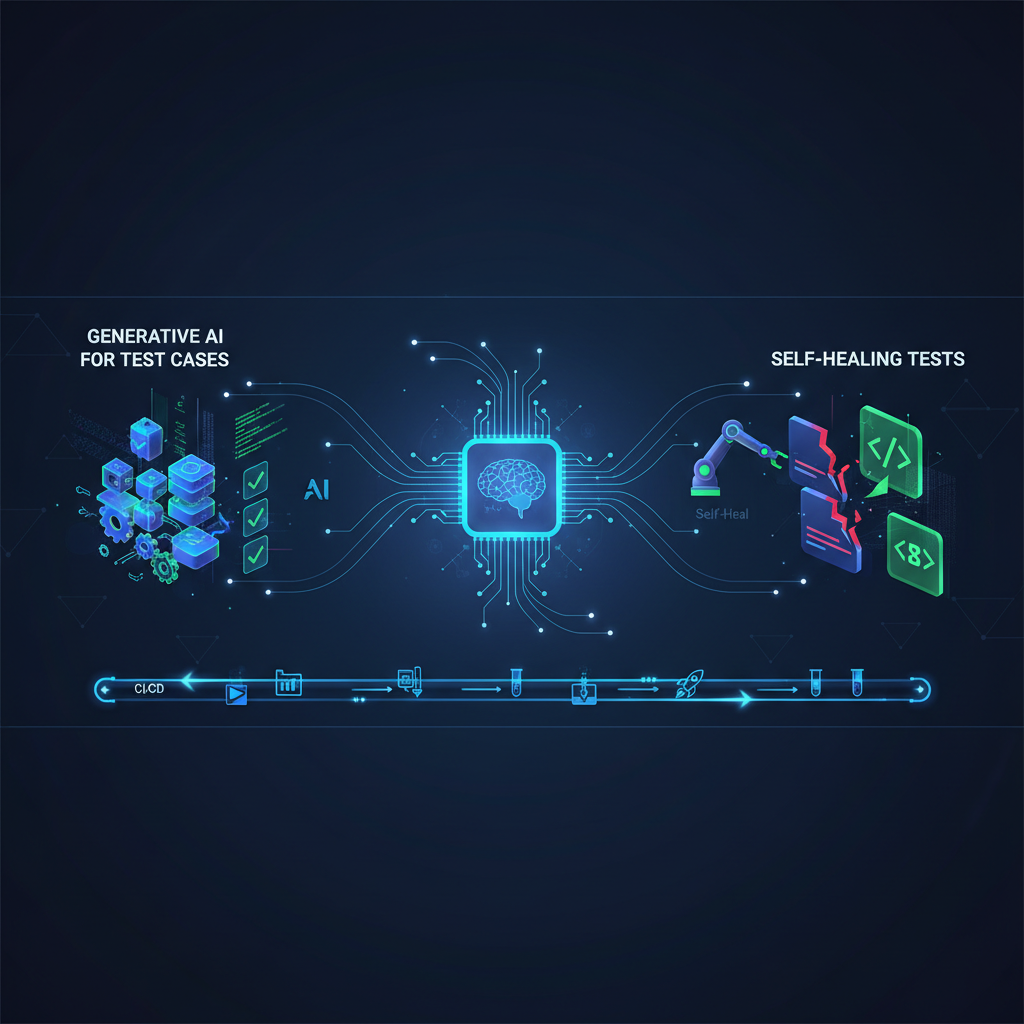

Enter Artificial Intelligence. AI is not just optimizing existing QA practices; it's fundamentally reshaping them. We are moving beyond simple test execution to a future where AI proactively understands, generates, and maintains our test suites. This blog post delves into two interconnected, high-impact advancements at the forefront of this revolution: AI-Powered Generative Test Case Generation and Self-Healing Tests. Together, these innovations are paving the way for truly intelligent, autonomous, and resilient continuous testing.

The Bottleneck of Manual Testing: Why AI is Essential

Before diving into the solutions, let's understand the challenge. Manual test case generation is:

- Time-Consuming: Writing detailed test cases, especially for complex systems, can take days or weeks.

- Prone to Human Error and Bias: Testers might inadvertently focus on "happy paths," miss edge cases, or overlook negative scenarios.

- Incomplete: It's virtually impossible for humans to envision every possible interaction or system state.

- Repetitive: Much of the process involves translating requirements into structured test steps, which can be automated.

Similarly, test maintenance is a silent killer of automation ROI:

- Fragile Locators: UI changes, even minor ones, frequently break automated tests, producing brittle suites that fail for reasons unrelated to real defects and are often dismissed as "flaky."

- High Maintenance Overhead: Teams spend significant time fixing broken tests instead of writing new ones or exploring new features.

- Slow Feedback Loops: Broken tests block CI/CD pipelines, delaying releases and eroding trust in automation.

These challenges highlight a clear need for intelligent automation. AI offers the promise of overcoming these hurdles, making QA a true enabler of continuous delivery rather than a bottleneck.

AI-Powered Generative Test Case Generation: From Requirements to Robust Tests

Imagine a world where test cases write themselves. This is the promise of AI-powered generative test case generation. It leverages advanced machine learning models to automatically create new, relevant, and diverse test cases based on various inputs, significantly accelerating the test development process and enhancing coverage.

How it Works: A Technical Deep Dive

The process typically involves feeding various data sources into sophisticated AI models, which then interpret, analyze, and synthesize this information to produce actionable test artifacts.

1. Input Data Sources: The Fuel for Generation

The quality of generated tests heavily relies on the richness and diversity of the input data.

- Requirements/User Stories: These are often the primary source. Natural language descriptions like "As a user, I want to add items to my cart so I can purchase them later" provide the core functionality.

- Existing Test Cases: A corpus of previously written, successful tests serves as a valuable learning resource. AI can learn patterns, common test structures, and effective testing strategies from these examples.

- Application Logs/Telemetry: Runtime data, error logs, and user interaction patterns from production or staging environments offer insights into real-world usage, common failure points, and critical user journeys.

- UI/API Specifications: Formal specifications like OpenAPI/Swagger definitions for APIs, or UI component libraries and wireframes, provide structured information about system interfaces and expected behaviors.

- Codebase Analysis: Static code analysis tools can provide insights into function signatures, data structures, control flow, and potential vulnerabilities, helping AI understand the internal workings of the application.
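
To make the specification input more concrete, here is a minimal sketch of how a generator might read an OpenAPI-style document and list what is worth testing. The `SPEC` fragment, the `/cart/items` path, and the `list_test_targets` helper are illustrative assumptions, not part of any real API or tool.

```python
from typing import Any

# Hypothetical, hand-written fragment in the shape of an OpenAPI document.
SPEC: dict[str, Any] = {
    "paths": {
        "/cart/items": {
            "post": {
                "parameters": [
                    {"name": "quantity", "in": "query",
                     "schema": {"type": "integer", "minimum": 1, "maximum": 10}},
                ],
            },
            "get": {"parameters": []},
        },
    },
}

HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def list_test_targets(spec: dict[str, Any]) -> list[dict[str, Any]]:
    """Return one entry per operation, with the numeric limits of its parameters."""
    targets = []
    for path, operations in spec.get("paths", {}).items():
        for method, operation in operations.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as "summary"
            limits = {}
            for param in operation.get("parameters", []):
                schema = param.get("schema", {})
                if "minimum" in schema or "maximum" in schema:
                    limits[param["name"]] = (schema.get("minimum"), schema.get("maximum"))
            targets.append({"method": method.upper(), "path": path, "limits": limits})
    return targets


for target in list_test_targets(SPEC):
    print(target)
```

Each entry pairs an operation with the constraints declared for it, which is the kind of structured input a model could expand into concrete test cases.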

2. AI Techniques Employed: The Brains Behind the Tests

Different AI techniques contribute to various aspects of test generation, often working in concert.

- Natural Language Processing (NLP) / Large Language Models (LLMs): These are the workhorses for understanding human-readable inputs and generating human-readable outputs.

- Requirement Understanding: LLMs excel at parsing user stories and requirements. They can identify key entities (e.g., "user," "product," "cart"), actions (e.g., "add," "remove," "checkout"), and constraints (e.g., "maximum 5 items," "minimum order value"). For example, from "User can add up to 10 items to their cart," an LLM can infer boundary conditions like 0, 1, 9, 10, and 11 items.

- Test Scenario Generation: Based on the parsed requirements, LLMs can generate diverse test scenarios. This includes:

- Happy Path: "User successfully adds an item to cart and proceeds to checkout."

- Edge Cases: "User attempts to add the 11th item to a cart already containing 10 items."

- Negative Cases: "User attempts to checkout with an empty cart."

- Boundary Conditions: "User adds 0, 1, 9, 10, and 11 items to the cart."

- Test Data Generation: LLMs can suggest realistic and valid test data, such as valid email formats, plausible product names, or boundary values for numeric fields (e.g., for an age field, suggesting 0, 1, 18, 65, 100, 120).

- Test Script Generation: For Behavior-Driven Development (BDD) frameworks, LLMs can translate scenarios into Gherkin's `Given-When-Then` statements. More advanced LLMs, especially those fine-tuned for code generation, can even produce skeleton code for specific automation frameworks like Selenium, Playwright, or Cypress, including locator strategies and interaction methods.

Example:

Input: "As a customer, I want to log in with my credentials to access my account."

LLM Output (Scenario):

```gherkin
Scenario: Successful login
  Given I am on the login page
  When I enter valid username "[email protected]" and password "Password123!"
  And I click the "Login" button
  Then I should be redirected to the "Dashboard" page
  And I should see a welcome message "Welcome, testuser!"

Scenario: Invalid password login attempt
  Given I am on the login page
  When I enter valid username "[email protected]" and invalid password "WrongPass"
  And I click the "Login" button
  Then I should see an error message "Invalid credentials."
  And I should remain on the "Login" page
```
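
The boundary conditions mentioned earlier for "User can add up to 10 items to their cart" can also be derived mechanically once the limits are known. The sketch below is plain boundary-value analysis, not an LLM. The `boundary_values` helper and its `allow_negative` flag are names made up for this illustration.

```python
def boundary_values(minimum: int, maximum: int, allow_negative: bool = False) -> list[int]:
    """Classic boundary-value analysis: each limit plus its immediate neighbours."""
    candidates = {minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1}
    if not allow_negative:
        # A cart quantity cannot be negative, so drop values below zero.
        candidates = {value for value in candidates if value >= 0}
    return sorted(candidates)


# "User can add up to 10 items to their cart" -> quantity ranges from 0 to 10.
for quantity in boundary_values(0, 10):
    expected = "accepted" if 0 <= quantity <= 10 else "rejected"
    print(f"add {quantity} items -> expect {expected}")
```

In practice the model's job is the harder step of extracting `minimum` and `maximum` from the requirement text. Once it has them, producing the values is deterministic.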

- Reinforcement Learning (RL): RL agents are particularly effective for exploratory testing and optimizing test sequences.

- Exploratory Testing Simulation: An RL agent can be trained to interact with an application like a human tester. It explores different states, clicks buttons, fills forms, and observes the application's responses. The "reward" function can be designed to incentivize discovering new paths, uncovering bugs, or maximizing code coverage. This allows for the generation of tests for previously uncovered areas.

- Adaptive Test Selection: RL can learn which types of tests (e.g., specific modules, data combinations) are most effective at finding bugs in particular parts of the application. It can then prioritize generating similar tests or focusing its exploratory efforts on those areas.
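
To give a feel for the "reward for discovering new paths" idea, the sketch below uses a count-based novelty heuristic on a toy application model. It is far simpler than a trained RL agent: there is no learned policy, only a preference for the least-tried action. The `APP` state map and the `explore` function are hypothetical and exist only for this example.

```python
from collections import defaultdict

# Hypothetical application model: state -> {action: next_state}.
APP = {
    "home": {"open_login": "login", "open_cart": "cart"},
    "login": {"submit_valid": "dashboard", "submit_invalid": "login_error"},
    "login_error": {"retry": "login"},
    "cart": {"checkout": "checkout", "back": "home"},
    "dashboard": {"logout": "home"},
    "checkout": {},
}


def explore(app, start, steps):
    """Walk the app, always taking the least-tried action in the current state."""
    tried = defaultdict(int)  # (state, action) -> number of times taken
    visited = {start}
    trace = []                # (state, action, next_state) tuples, reusable as test steps
    state = start
    for _ in range(steps):
        actions = app[state]
        if not actions:       # dead end: restart the session from the start state
            state = start
            continue
        # Novelty signal: prefer the action taken least often from this state.
        action = min(actions, key=lambda a: tried[(state, a)])
        tried[(state, action)] += 1
        next_state = actions[action]
        trace.append((state, action, next_state))
        visited.add(next_state)
        state = next_state
    return visited, trace


visited, trace = explore(APP, "home", steps=20)
print(sorted(visited))
```

A real agent would replace the `min` rule with a learned policy and a richer reward (for example, coverage gained or errors observed), but the recorded `trace` plays the same role: it is raw material for new test cases.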

- Generative Adversarial Networks (GANs) / Variational Autoencoders (VAEs): These models excel at generating synthetic data that mimics the characteristics of real data.

- Synthetic Data Generation: For complex data types like images (e.g., for visual testing of AI models), complex JSON structures (e.g., for API testing), or diverse user profiles, GANs/VAEs can generate realistic synthetic data. This is crucial when real data is scarce, sensitive, or too uniform to thoroughly test edge cases.

- Graph Neural Networks (GNNs): GNNs are powerful for modeling relationships and structures.

- Application State Modeling: GNNs can represent an application's UI states, API endpoints, or user flows as a graph, where nodes are states/pages/endpoints and edges are actions/transitions. By analyzing this graph, GNNs can identify critical paths, dead ends, and complex multi-step scenarios, generating tests that ensure comprehensive traversal and validation of these paths.
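
The graph view of an application described above is useful even before any neural network is involved. As a rough sketch, the code below stores user flows as a graph and enumerates end-to-end paths with an ordinary depth-first search. This is classical graph traversal rather than a GNN, and `APP_FLOW` and `enumerate_paths` are assumed names for the example.

```python
# state -> {action: next_state}; a hypothetical model of a small shop.
APP_FLOW: dict[str, dict[str, str]] = {
    "home": {"open_cart": "cart", "open_login": "login"},
    "login": {"submit_valid": "dashboard"},
    "cart": {"checkout": "checkout", "back": "home"},
    "dashboard": {},
    "checkout": {},
}


def enumerate_paths(graph, start, max_depth=5):
    """List action sequences from `start` that cannot be extended without revisiting a state."""
    paths = []

    def walk(state, actions, seen):
        extended = False
        for action, next_state in graph[state].items():
            # Skip transitions that loop back, and stop at the depth limit.
            if next_state not in seen and len(actions) < max_depth:
                extended = True
                walk(next_state, actions + [action], seen | {next_state})
        if not extended:
            paths.append(actions)

    walk(start, [], {start})
    return paths


for path in enumerate_paths(APP_FLOW, "home"):
    print(" -> ".join(path))
```

Each printed sequence is a candidate multi-step scenario. A learned model would add value on top of this by ranking which paths are most likely to expose defects.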

Practical Applications: Tangible Benefits

- Accelerated Test Development: Drastically reduces the time and effort testers spend manually writing new test cases, freeing them for more complex tasks like test strategy and exploratory testing.

- Increased Test Coverage: AI can identify and generate tests for edge cases, negative scenarios, and boundary conditions that human testers might overlook, leading to more comprehensive test suites.

- Reduced Human Bias: By analyzing data objectively, AI can generate a more diverse and unbiased set of tests, avoiding the common pitfall of human testers focusing solely on "happy paths" or familiar scenarios.

- Shift-Left Testing: Test cases can be generated much earlier in the development cycle, even from preliminary requirements or design mockups, enabling earlier bug detection and reducing the cost of fixing defects.

- Automated Regression Suite Expansion: As new features are developed, AI can automatically generate new tests to cover them and expand the regression suite, ensuring existing functionality remains intact without manual intervention.

AI-Powered Self-Healing Tests: The End of Flaky Tests

One of the biggest headaches in test automation is maintenance. UI changes, even minor ones, frequently break automated tests, leading to "flaky" tests that waste time and erode confidence. AI-powered self-healing tests address this by automatically detecting and adapting to changes in the Application Under Test (AUT), dramatically reducing maintenance overhead.

How it Works: A Technical Deep Dive

Self-healing tests don't just execute; they observe, learn, and adapt. The core process involves detecting changes, re-identifying elements, and then updating the test script's understanding of the application.

1. Change Detection: Spotting the Differences

The first step is to identify that something in the UI or underlying structure has changed.

- Visual AI/Computer Vision: AI models analyze screenshots or Document Object Model (DOM) snapshots of the application's UI before and after a change. They can detect visual differences, such as an element's relocation, a change in its size or color, or even subtle stylistic shifts. This is particularly useful for identifying changes that might not affect locators but impact user experience.

- DOM/Accessibility Tree Analysis: At a lower level, AI compares the structure of the DOM (the hierarchical representation of a web page) or the accessibility tree between versions. It identifies changes in element attributes (e.g., `id`, `class` names), in the XPath or CSS selectors that depend on them, in hierarchy (e.g., an element moved inside a different parent `div`), or in text content.
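
At its simplest, the attribute comparison described above is a diff between two recorded snapshots of the same element. The sketch below assumes the attributes have already been captured by a browser driver and stored as plain dictionaries. The `diff_attributes` helper and the sample values are illustrative only.

```python
def diff_attributes(before: dict[str, str], after: dict[str, str]) -> dict[str, tuple]:
    """Return {attribute: (old_value, new_value)} for every attribute that differs."""
    changes = {}
    for key in sorted(before.keys() | after.keys()):
        old, new = before.get(key), after.get(key)
        if old != new:
            changes[key] = (old, new)
    return changes


# Hypothetical snapshots of a login button before and after a UI release.
before = {"id": "loginButton", "class": "btn-primary", "text": "Login", "parent": "form#loginForm"}
after = {"id": "signInButton", "class": "btn-primary", "text": "Login", "parent": "div.actions"}

print(diff_attributes(before, after))
# {'id': ('loginButton', 'signInButton'), 'parent': ('form#loginForm', 'div.actions')}
```

Here the `id` and the parent changed while the class and text stayed stable, which is exactly the information the re-identification step needs.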

2. Element Re-identification/Relocation: Finding the Lost Element

Once a change is detected, the critical task is to re-identify the "lost" element.

- Heuristic Algorithms: When an element's primary locator (e.g., `id="loginButton"`) changes, the system employs a series of fallback strategies. It might look for secondary locators that haven't changed (e.g., `class="btn-primary"`, `text="Login"`), or use contextual information like sibling elements (`<input type="password">` next to the button) or parent elements (`<form id="loginForm">`). Proximity to other stable elements is also a strong heuristic.

- Machine Learning (ML) Models: More advanced self-healing systems leverage ML for robust element re-identification.

- Feature Vectors: Each UI element is represented as a rich feature vector. This vector encapsulates all its attributes (`id`, `class`, `name`, `type`, `text`), visual characteristics (position, size, color), and contextual information (its parent, children, siblings, and their attributes).

- Similarity Matching: ML models, such as Siamese networks or clustering algorithms, are trained to understand the "similarity" between elements. When an element's primary locator breaks, the model searches the new UI state for the element that is "most similar" to the original element based on its feature vector. This allows it to re-identify an element even if its `id` changes and it moves to a different part of the page, as long as enough other features remain consistent.

- Predictive Models: Some cutting-edge systems learn patterns of change over time. If a certain type of button frequently changes its class name but always retains its text content, the model can learn to predict this pattern and prioritize text content for re-identification in future changes.
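
The similarity-matching idea can be illustrated without any training at all. The sketch below scores candidates by weighted attribute overlap, a hand-tuned stand-in for the learned similarity a Siamese network would provide. The `WEIGHTS` values, the `0.5` threshold, and the function names are assumptions chosen for the example.

```python
# Assumed importance of each attribute; a learned model would infer these.
WEIGHTS = {"id": 3.0, "text": 3.0, "class": 2.0, "type": 1.0, "parent": 1.0}


def similarity(original: dict, candidate: dict) -> float:
    """Fraction of total weight carried by attributes that match exactly."""
    matched = sum(
        weight
        for attr, weight in WEIGHTS.items()
        if original.get(attr) is not None and original.get(attr) == candidate.get(attr)
    )
    return matched / sum(WEIGHTS.values())


def reidentify(original: dict, candidates: list[dict], threshold: float = 0.5):
    """Return the most similar candidate, or None if nothing is similar enough."""
    best = max(candidates, key=lambda c: similarity(original, c), default=None)
    if best is None or similarity(original, best) < threshold:
        return None
    return best


original = {"id": "loginButton", "text": "Login", "class": "btn-primary",
            "type": "submit", "parent": "loginForm"}
candidates = [
    {"id": "signInButton", "text": "Login", "class": "btn-primary",
     "type": "submit", "parent": "authPanel"},
    {"id": "cancelButton", "text": "Cancel", "class": "btn-secondary",
     "type": "button", "parent": "authPanel"},
]

match = reidentify(original, candidates)
print(match["id"] if match else "no confident match")
```

The threshold matters as much as the score: returning `None` and failing the test is safer than silently clicking the wrong element.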

3. Test Script Adaptation: Learning and Updating

The final step is to incorporate the re-identified element back into the test.

- Dynamic Locator Updates: Once an element is re-identified, the test script's locator strategy for that element is automatically updated. This update can be temporary (for the current test run) or persistent (saved back into the test suite, often after human review).

- Test Step Adjustment: In more complex scenarios, if a sequence of UI interactions changes (e.g., a button is moved to a different screen, or a new intermediate step is introduced, like a consent pop-up), AI might not just update a locator but suggest or even automatically insert/modify test steps to accommodate the change.

- Feedback Loop: Crucially, the self-healing process provides feedback. It logs which elements were re-identified, what changes occurred, and how the test adapted. This information is invaluable for developers and testers to understand the impact of their UI changes and decide if a permanent update to the test suite or even a design review is needed.
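
The distinction between temporary and persistent updates, along with the feedback log, can be sketched as a small data structure. Everything here (`LocatorStore`, `HealingEvent`, the locator strings) is a hypothetical design for illustration, not the API of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass
class HealingEvent:
    element: str
    old_locator: str
    new_locator: str
    reason: str
    approved: bool = False  # persistent updates wait for human review


@dataclass
class LocatorStore:
    locators: dict[str, str]                                  # saved in the test suite
    overrides: dict[str, str] = field(default_factory=dict)   # current run only
    log: list[HealingEvent] = field(default_factory=list)     # feedback for the team

    def resolve(self, element: str) -> str:
        """Prefer a healed locator from this run, else the saved one."""
        return self.overrides.get(element, self.locators[element])

    def heal(self, element: str, new_locator: str, reason: str) -> HealingEvent:
        """Apply a temporary fix and record what happened."""
        event = HealingEvent(element, self.resolve(element), new_locator, reason)
        self.overrides[element] = new_locator
        self.log.append(event)
        return event

    def approve(self, event: HealingEvent) -> None:
        """Make a reviewed fix persistent."""
        event.approved = True
        self.locators[event.element] = event.new_locator


store = LocatorStore({"login_button": "#loginButton"})
event = store.heal("login_button", "text=Login", "id changed; matched by text content")
print(store.resolve("login_button"), store.locators["login_button"])
store.approve(event)
print(store.locators["login_button"])
```

Keeping the saved locators untouched until `approve` is called is one way to get the benefit of healing during a run while leaving the final decision with a person.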

Practical Applications: The ROI of Resilience

- Reduced Test Maintenance: This is the most significant benefit. Teams spend drastically less time fixing broken tests, allowing them to focus on new feature development and exploratory testing.

- Increased Test Reliability: Tests become more resilient and less "flaky," leading to more trustworthy automation and more accurate feedback on code changes.

- Faster Feedback Loops: Developers receive quicker feedback on their changes without waiting for manual test script updates, accelerating the development cycle.

- Enabling Continuous Integration/Deployment (CI/CD): Self-healing tests are critical for maintaining a robust and fast CI/CD pipeline. They prevent test failures due to minor UI tweaks from blocking deployments, ensuring a smooth flow from code commit to production.

- Improved Tester Productivity: Testers are freed from the mundane task of fixing broken locators, allowing them to engage in higher-value activities like designing complex test scenarios, performing exploratory testing, and understanding user behavior.

Emerging Trends & The Integrated Future

The true power of AI in QA emerges when generative test case generation and self-healing tests are combined.

- Synergy and Integration: Imagine a system that not only generates new tests based on evolving requirements but also continuously monitors the AUT. If the AUT changes, the system's self-healing capabilities update existing tests. Furthermore, if the changes are significant enough, the generative AI might even suggest or create entirely new tests to cover the modified functionality or newly exposed paths. This creates a self-optimizing test ecosystem.

- Explainable AI (XAI) in QA: As AI takes on more critical roles, understanding why a particular test was generated (e.g., "This test was generated because the LLM identified a boundary condition in the quantity field") or how a test self-healed (e.g., "The 'Submit' button's ID changed, but it was re-identified by its text content and proximity to the 'Password' field") becomes crucial. XAI techniques will provide transparency, build trust, and aid in debugging and auditing.

- Low-Code/No-Code Test Automation: AI-powered generation and healing will further democratize test automation. Business analysts, product owners, and even non-technical users will be able to contribute more effectively to the testing process by describing requirements in natural language or validating visual changes, with AI handling the underlying test script creation and maintenance.

- Adaptive Test Prioritization: Beyond just generating tests, AI will learn to prioritize which tests to run based on factors like recent code changes, risk assessment of affected modules, historical defect data, and even real-time production telemetry. This optimizes testing cycles, ensuring the most critical tests are run first and frequently.

- AI for Performance and Security Testing: While the focus here is on functional tests, generative AI can also create diverse load profiles and user concurrency patterns for performance testing, or generate sophisticated, malicious input patterns (e.g., SQL injection, XSS payloads) for security testing, going beyond simple fuzzing.
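
As a rough illustration of the adaptive test prioritization trend above, the sketch below ranks tests by a weighted score that combines whether a test touches recently changed modules with its historical failure rate. The weights, the `SuiteEntry` fields, and the sample data are assumptions. A production system would learn these from real history rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class SuiteEntry:
    name: str
    covers: set[str]      # modules this test exercises
    failure_rate: float   # share of past runs that failed, between 0.0 and 1.0


def priority(entry: SuiteEntry, changed: set[str],
             w_change: float = 0.7, w_history: float = 0.3) -> float:
    """Higher score means run earlier."""
    touches_change = 1.0 if entry.covers & changed else 0.0
    return w_change * touches_change + w_history * entry.failure_rate


def prioritize(entries: list[SuiteEntry], changed: set[str]) -> list[SuiteEntry]:
    return sorted(entries, key=lambda e: priority(e, changed), reverse=True)


suite = [
    SuiteEntry("test_profile_page", {"profile"}, 0.05),
    SuiteEntry("test_checkout", {"cart", "payment"}, 0.10),
    SuiteEntry("test_search", {"search"}, 0.40),
]

for entry in prioritize(suite, changed={"cart"}):
    print(entry.name)
```

With a change in the `cart` module, the checkout test moves to the front even though the search test has failed more often in the past.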

Value for AI Practitioners and Enthusiasts

This field offers fertile ground for AI practitioners and enthusiasts alike:

- Real-World Application of Cutting-Edge AI: This domain provides tangible, high-impact applications for LLMs, RL, GNNs, and computer vision. It's an opportunity to move beyond theoretical models and solve concrete, industry-wide problems that directly impact software quality and delivery speed.

- Interdisciplinary Challenge: It's a fascinating intersection of AI/ML, software engineering, quality assurance methodologies, and user experience design. Solving these problems requires a holistic understanding of software development.

- Data-Rich Environment: Software development generates vast amounts of data – code repositories, requirements documents, bug reports, application logs, test results, UI snapshots. This rich data environment is perfect for training, validating, and continuously improving AI models.

- Ethical Considerations: As AI takes over more critical functions in QA, practitioners must consider the ethical implications. This includes ensuring fairness and avoiding bias in AI-generated tests (e.g., not neglecting certain user groups), understanding the impact of over-reliance on automation, and maintaining human oversight.

- Contribution to Software Reliability: Developing these AI solutions directly contributes to building more reliable, robust, and higher-quality software systems. In an increasingly software-dependent world, this impact is profound and affects virtually every industry and aspect of daily life.

Conclusion

The journey towards fully autonomous and intelligent QA is well underway. AI-powered generative test case generation and self-healing tests are not just incremental improvements; they represent a paradigm shift. By automating the creation of comprehensive test suites and ensuring their resilience against application changes, these technologies are dismantling the traditional bottlenecks of QA. They are enabling true continuous testing, accelerating software delivery, and allowing human testers to elevate their role from manual execution and maintenance to strategic oversight, complex problem-solving, and innovative exploration. The future of software quality is intelligent, adaptive, and autonomous, and AI is the driving force behind this exciting evolution.