Multi-Modal Foundation Models: Unlocking the Next Era of AI Understanding

Discover how Multi-Modal Foundation Models are revolutionizing AI by enabling systems to understand, reason, and generate across diverse data types simultaneously, moving beyond siloed, single-modality approaches.

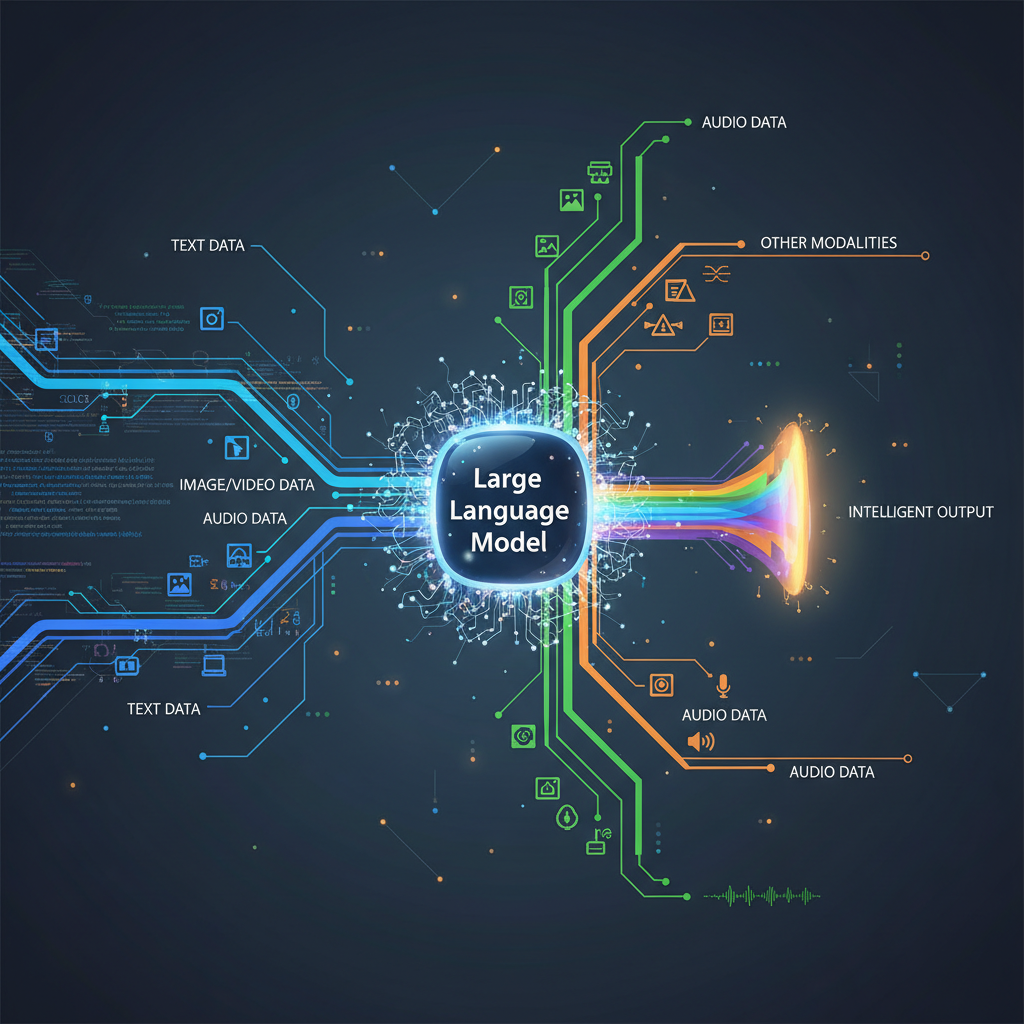

The landscape of artificial intelligence is undergoing a profound transformation. For decades, AI models excelled within the confines of single data types – text for natural language processing, images for computer vision, and audio for speech recognition. While these specialized systems achieved remarkable feats, they often operated in isolation, unable to bridge the semantic gaps between different modalities. This siloed approach limited their ability to grasp the richness and complexity of the real world, which is inherently multi-modal.

However, a new paradigm has emerged: Multi-Modal Foundation Models. These groundbreaking models are not just an incremental improvement; they represent a fundamental shift, enabling AI to understand, reason, and generate across diverse data types simultaneously. Building on the success of large language models (LLMs), which demonstrated the power of massive datasets and scalable architectures, multi-modal foundation models extend this concept to encompass text, images, audio, video, and even 3D data. This convergence is paving the way for AI systems that perceive and interact with the world in a manner far closer to human cognition, unlocking unprecedented capabilities and practical applications across every industry.

The Evolution from Unimodal to Multimodal AI

To appreciate the significance of multi-modal foundation models, it's crucial to understand the journey that led us here.

Unimodal AI: Specialized Excellence Early AI systems, and indeed many current production systems, are designed for specific modalities:

- Natural Language Processing (NLP): Models like BERT, GPT-3, and LLaMA excel at understanding, generating, and translating human language.

- Computer Vision (CV): Architectures like ResNet, YOLO, and Vision Transformers (ViT) are adept at image classification, object detection, and segmentation.

- Speech Recognition: Models like Wav2Vec and Whisper transform audio into text.

These models achieved state-of-the-art performance within their domains, but their knowledge was largely confined. An NLP model couldn't "see" an image, nor could a CV model "understand" a spoken command without an intermediary translation layer.

The Foundation Model Paradigm Shift The advent of large transformer-based models, particularly in NLP, demonstrated that training a single, massive model on a vast and diverse dataset could yield a "foundation" of knowledge. This foundation could then be adapted to a multitude of downstream tasks with minimal fine-tuning, a concept known as few-shot or zero-shot learning. This efficiency and generalization capability sparked the idea: what if we could apply this paradigm to multiple modalities?

Bridging the Modality Gap Multi-modal foundation models aim to create a shared latent space where information from different modalities can be represented and understood interchangeably. Imagine a model that can "see" a picture of a cat, "read" the word "cat," and "hear" the sound of a cat's meow, and understand that all three refer to the same underlying concept. This cross-modal understanding is achieved through various architectural innovations and training strategies.

Key Architectural and Training Innovations

The development of multi-modal foundation models relies on several sophisticated techniques:

1. Unified Architectures

Instead of simply concatenating outputs from unimodal models, multi-modal models often employ unified architectures designed to process diverse inputs natively.

- Shared Transformers: Many models extend the Transformer architecture to handle different input types. For instance, visual tokens (patches from an image) and text tokens can be fed into the same Transformer encoder, allowing for cross-attention mechanisms to learn relationships between them.

- Cross-Attention Mechanisms: These are critical for allowing different modalities to "attend" to each other. For example, in a Vision-Language Model (VLM), a text token might attend to relevant image regions, and vice versa.

- Modality-Specific Encoders + Shared Decoder: Some architectures use separate encoders for each modality (e.g., a Vision Transformer for images, a text Transformer for language) but then project their outputs into a shared embedding space, which is then fed into a common decoder for multi-modal generation or reasoning.

2. Massive and Diverse Datasets

The "foundation" aspect of these models hinges on gargantuan datasets. These datasets are not only large but also diverse, containing paired or weakly-paired data across modalities.

- Image-Text Pairs: Datasets like LAION-5B, Conceptual Captions, and COCO are crucial for training VLMs, providing millions or billions of images with corresponding text descriptions.

- Video-Text/Audio Pairs: Datasets containing videos with transcribed speech, captions, or descriptions are essential for video and audio-visual models.

- Web-Scale Data: Many models leverage the vastness of the internet, scraping publicly available data to find implicit connections between modalities.

3. Contrastive Learning

A common training objective, especially for aligning different modalities, is contrastive learning. Models like CLIP (Contrastive Language-Image Pre-training) learn to associate corresponding pairs (e.g., an image and its correct caption) by pushing their embeddings closer in the shared latent space, while simultaneously pushing apart non-corresponding pairs. This allows the model to learn robust representations that capture semantic similarity across modalities.

4. Instruction Tuning and Alignment

Similar to LLMs, multi-modal models benefit immensely from instruction tuning. By training on diverse tasks framed as instructions (e.g., "Describe this image," "Generate an image of X," "Answer the question about this video"), models learn to follow complex commands and generalize to new, unseen instructions. Reinforcement Learning from Human Feedback (RLHF) or similar alignment techniques are also employed to ensure the models' outputs are helpful, harmless, and aligned with human preferences.

Leading Multi-Modal Models and Their Capabilities

The field is rapidly evolving, with new models and capabilities emerging constantly. Here are some prominent examples:

-

Vision-Language Models (VLMs):

- DALL-E 2/3, Midjourney, Stable Diffusion, Imagen: These models excel at text-to-image generation, creating highly realistic and diverse images from textual prompts. DALL-E 3, integrated with ChatGPT, allows for more nuanced control and iterative refinement.

- CLIP (OpenAI): A foundational VLM that learns robust image and text representations, enabling zero-shot image classification and retrieval.

- BLIP-2 (Salesforce), LLaVA (Microsoft/UW-Madison), InstructBLIP (Salesforce): These models combine a vision encoder with an LLM, enabling visual question answering (VQA), detailed image captioning, and multi-turn conversations about images.

- Grounding DINO (Tsinghua/Meta): A powerful open-set object detector that can detect any object described in text, demonstrating the power of language to "ground" visual perception.

-

Audio-Visual Models:

- SeamlessM4T (Meta): A groundbreaking model for speech-to-speech and text-to-speech translation across nearly 100 languages, preserving speaker voice and emotion.

- Whisper (OpenAI): While primarily an audio-to-text model, its robust performance across languages and accents makes it a key component in many multi-modal pipelines.

-

Video Understanding and Generation:

- RunwayML's Gen-1/Gen-2, Google's Lumiere, OpenAI's Sora: These represent the cutting edge of text-to-video generation, producing surprisingly coherent and dynamic video clips from text prompts, hinting at a future where video creation is as easy as typing.

- Video-LLaMA, Video-ChatGPT: Extensions of VLMs to video, capable of answering questions about video content, summarizing events, and generating captions.

-

Unified Multi-Modal Models:

- Google Gemini: Designed from the ground up to be natively multi-modal, capable of understanding and operating across text, images, audio, and video inputs. Its "Ultra" version demonstrates advanced reasoning capabilities across these modalities.

Practical Applications and Use Cases

The implications of multi-modal foundation models are vast, touching nearly every sector.

1. Content Creation and Marketing

- Personalized Ads: Generating unique ad creatives (images, short videos) tailored to specific audience segments based on text descriptions.

- Rapid Prototyping: Designers can quickly generate multiple visual concepts for products, websites, or marketing campaigns from simple text prompts.

- Dynamic Storytelling: Creating interactive narratives where users' text inputs influence visual and audio elements.

- Automated Asset Generation: Generating game assets, architectural visualizations, or product mock-ups with textual specifications.

2. Accessibility

- Enhanced Image Descriptions: Automatically generating rich, detailed descriptions of images and videos for visually impaired users, going beyond simple object recognition to describe context and emotion.

- Real-time Sign Language Translation: Converting sign language video into spoken or written language, and vice-versa.

- Multi-lingual Communication: Seamless speech-to-speech translation for global communication, breaking down language barriers in real-time.

3. Education and Training

- Interactive Learning: Creating dynamic educational content where students can ask questions about images, diagrams, or videos using natural language, and receive contextually relevant answers.

- Personalized Tutoring: AI tutors that can analyze student's visual work (e.g., math problems written on a whiteboard) and provide verbal feedback or explanations.

- Virtual Labs: Simulating complex experiments with visual and textual instructions, allowing students to interact with virtual environments.

4. Healthcare and Medicine

- Diagnostic Assistance: Aiding radiologists by analyzing medical images (X-rays, MRIs) and generating descriptive reports or highlighting anomalies, potentially cross-referencing with patient history in text format.

- Patient Monitoring: Analyzing video feeds of patients (e.g., elderly care) for falls or unusual behavior, and generating alerts with visual context.

- Telemedicine: Enhancing virtual consultations by allowing doctors to analyze visual cues from patients' video feeds while processing their verbal descriptions of symptoms.

5. E-commerce and Retail

- Visual Search: Customers can upload an image of an item they like and find similar products, or describe an item in text and see generated product variations.

- Virtual Try-on: Generating realistic images or videos of clothing/accessories on a customer's uploaded photo or video.

- Automated Product Descriptions: Generating compelling product descriptions and marketing copy from product images and key features.

6. Robotics and Embodied AI

- Intuitive Human-Robot Interaction: Robots can understand complex natural language commands that refer to objects in their visual field (e.g., "Pick up the red mug on the table next to the laptop").

- Autonomous Navigation: Robots using visual, audio, and linguistic cues to navigate complex environments and avoid obstacles, understanding spoken warnings or signs.

- Complex Task Execution: Robots learning new tasks by observing human demonstrations (video) and receiving verbal instructions.

7. Data Analysis and Business Intelligence

- Social Media Analysis: Analyzing posts that combine images, videos, and text to gain deeper insights into public sentiment, brand perception, and emerging trends.

- Customer Feedback: Extracting insights from customer reviews that include product images or unboxing videos, not just text.

- Security and Surveillance: Detecting anomalies in video feeds (e.g., unauthorized access, suspicious packages) and generating detailed textual alerts, potentially integrating with audio analysis for unusual sounds.

Challenges and Ethical Considerations

Despite their immense potential, multi-modal foundation models present significant challenges:

- Computational Cost: Training and deploying these models require massive computational resources, limiting access and increasing environmental impact.

- Data Scarcity for Specific Modalities: While text and image data are abundant, high-quality, paired multi-modal datasets for less common modalities (e.g., tactile, olfactory) are scarce.

- Bias Amplification: If training data reflects societal biases, the models can perpetuate and even amplify these biases in their outputs (e.g., generating stereotypical images for certain professions).

- Misinformation and Deepfakes: The ability to generate highly realistic images, audio, and video raises concerns about the creation and spread of deceptive content.

- Intellectual Property: The use of vast amounts of internet data for training raises questions about copyright and fair use.

- Interpretability and Control: Understanding why a multi-modal model produces a certain output can be challenging, making it difficult to debug or ensure safety.

Addressing these challenges requires ongoing research, responsible development practices, robust ethical guidelines, and collaboration across academia, industry, and policy-makers.

The Road Ahead

The journey of multi-modal foundation models has only just begun. We are witnessing a rapid convergence of AI capabilities that promises to reshape how humans interact with technology and how technology interacts with the world. Future developments will likely focus on:

- Increased Efficiency: Developing more efficient architectures and training methods to reduce computational costs.

- Enhanced Reasoning: Improving the models' ability to perform complex, abstract reasoning across modalities, moving beyond mere pattern recognition.

- Personalization: Creating models that can adapt and learn from individual user preferences and contexts.

- Embodied Intelligence: Tighter integration with physical robots and real-world sensors to enable more sophisticated and adaptive embodied AI.

- Ethical AI: Robust frameworks for identifying and mitigating bias, ensuring fairness, transparency, and accountability.

Conclusion

Multi-modal foundation models are not merely an evolution; they are a revolution. By enabling AI to perceive, understand, and generate across diverse data types, they are pushing the boundaries of what's possible, moving us closer to truly intelligent systems that can engage with the world in a holistic, human-like manner. For AI practitioners, this means a new frontier of innovation, demanding a broader understanding of different data types and their intricate relationships. For enthusiasts, it offers a captivating glimpse into a future where AI systems are more intuitive, creative, and capable of solving complex, real-world problems. The rapid pace of advancements, coupled with the profound practical and ethical implications, makes this one of the most exciting and impactful areas in artificial intelligence today. The ability to bridge the sensory gaps in AI is not just a technical achievement; it's a step towards a more intelligent and interconnected future.